1 Scope

This document specifies an OPC UA Information Model for the representation of a machine vision system. OPC Machine Vision, part 1 aims at straightforward integration of a machine vision system into production control and IT systems. The scope is not only to complement or substitute existing interfaces between a machine vision system and its process environment by OPC UA, but also to create non-existent horizontal and vertical integration abilities to communicate relevant data to other authorized process participants, e.g. up to the IT enterprise level. To this end, the OPC Machine Vision interface allows for the exchange of information between a machine vision system and another machine vision system, a station PLC, a line controller, or any other software system in areas like MES, SCADA, ERP or data analytics systems.

2 Normative references

The following documents, in whole or in part, are normatively referenced in this document and are indispensable for its application. For dated references, only the edition cited applies. For undated references, the latest edition of the referenced document (including any amendments) applies.

OPC 10000-1, OPC Unified Architecture - Part 1: Overview and Concepts.


OPC 10000-2, OPC Unified Architecture - Part 2: Security Model.


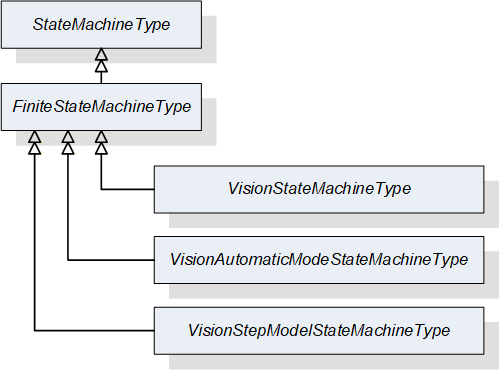

OPC 10000-3, OPC Unified Architecture - Part 3: Address Space Model.


OPC 10000-4, OPC Unified Architecture - Part 4: Services.


OPC 10000-5, OPC Unified Architecture - Part 5: Information Model.


OPC 10000-6, OPC Unified Architecture - Part 6: Mappings.


OPC 10000-7, OPC Unified Architecture - Part 7: Profiles.


OPC 10000-9, OPC Unified Architecture - Part 9: Alarms & Conditions.


SEMI E10-0312, SEMI E10 Standard: Specification for Definition and Measurement of Equipment Reliability, Availability, and Maintainability (RAM) and Utilization.

3 Terms, definitions and conventions

3.1 Terms

| Term | Definition of Term |
|---|---|
| Camera | Vision sensor that is capable of extracting information from electro-magnetic waves. |
| Client | Receiver of information. Requests services from a server, usually an OPC Machine Vision system. |
| Configuration | Information stored in a configuration ensures that different vision systems generate equal results if the same recipe is used. |
| Environment | The set of external entities working with the vision system in one way or another, e.g. PLC, MES, etc. |
| External | Not part of the vision system or the OPC UA server; may refer to the automation system, the manufacturing execution system or other entities. |
| Job | The main purpose of a machine vision system is to execute jobs. A job may be a simple task such as measurement of a part’s diameter, or much more complex, like surface inspection of a long, continuous roll of printing paper. |
| Machine Vision System | A system for machine vision is any complex information processing system / smart camera / vision sensor / other component which, in the production context, is capable of extracting information from electro-magnetic waves in accordance with a given image processing task. |
| Inline Machine Vision System | Denotes a machine vision system which is used in the manner of a system working continuously within a production line (hence the name). This can mean 100% quality inspection, as well as providing poses for robot-guidance for all parts or inspection of the entire area of a continuous material stream and other similar use cases. |
| Product | In an industrial environment a machine vision system is usually used to check products that are manufactured. The name of such a product is often used outside the machine vision system to reference recipes of the devices used to manufacture the product. This eliminates the need for the external production control systems to know the IDs of local recipes of each device. |
| Recipe | Properties, procedures and parameters that describe a machine vision job for the vision system are stored in a recipe. The actual content of the data structure is out of the scope of this specification. |
| Server | Information provider classified by the services it provides. The vision system commonly acts as an OPC UA server. |
| State Machine | A finite-state machine (FSM), or simply a state machine, is a mathematical model of computation. It is an abstract machine that can be in exactly one of a finite number of states at any given time. The state machine can change from one state to another in response to some external inputs. The change from one state to another is called a transition. A state machine is defined by a list of its states, its initial state, and the conditions for each transition. |
| System-wide unique | Used in conjunction with identifiers and handles to denote that at any given time no other entity of the same type and meaning shall exist in the OPC UA server with the same value. No further assumptions about global or historical uniqueness are made; especially in the case of identifiers, however, globally unique identifiers are recommended. |
| Vision System | The underlying machine vision system for which the OPC UA server provides an abstracted view. |
| WebSocket | WebSocket is a computer communications protocol, providing full-duplex communication channels over a single TCP connection. |

3.2 Abbreviations

| Abbreviation | Definition of Abbreviation |
|---|---|
| AC | Alarm and Condition |
| BLOB | A Binary Large Object (BLOB) is a collection of binary data stored as a single entity in a database management system. |
| DCS | A distributed control system (DCS) is a computerised control system for a process or plant, usually with a large number of control loops, in which autonomous controllers are distributed throughout the system, but there is central operator supervisory control. The DCS concept increases reliability and reduces installation costs by localising control functions near the process plant, with remote monitoring and supervision. |
| ERP | Enterprise resource planning (ERP) is the integrated management of core business processes, often in real-time and mediated by software and technology. |
| HMI | The human–machine interface (HMI), or user interface, is the part of the machine that handles the human–machine interaction. |
| HTTP | The Hypertext Transfer Protocol (HTTP) is an application protocol for distributed, collaborative, and hypermedia information systems. |
| ID | Identifier |
| MES | Manufacturing execution systems (MES) are computerized systems used in manufacturing to track and document the transformation of raw materials to finished goods. MES provides information that helps manufacturing decision makers understand how current conditions on the plant floor can be optimized to improve production output. |
| PLC | A programmable logic controller (PLC), or programmable controller, is an industrial digital computer which has been ruggedized and adapted for the control of manufacturing processes, such as assembly lines or robotic devices, or any activity that requires high reliability control and ease of programming and process fault diagnosis. |
| PMS | The Product Manufacturing System (PMS) is generally a non-critical system for manufacturing activities, as it establishes a communication with the board line systems that directly and physically handle production progress. |
| TCP/IP | The Internet protocol suite is the conceptual model and set of communications protocols used on the Internet and similar computer networks. It is commonly known as TCP/IP because the foundational protocols in the suite are the Transmission Control Protocol (TCP) and the Internet Protocol (IP). |

3.3 Conventions used in this document

3.3.1 Conventions for Node descriptions

Node definitions are specified using tables (see Table 4).

Attributes are defined by providing the Attribute name and a value, or a description of the value.

References are defined by providing the ReferenceType name, the BrowseName of the TargetNode and its NodeClass.

If the TargetNode is a component of the Node being defined in the table, the Attributes of the composed Node are defined in the same row of the table.

The DataType is only specified for Variables; “[<number>]” indicates a single-dimensional array; for multi-dimensional arrays the expression is repeated for each dimension (e.g. [2][3] for a two-dimensional array). For all arrays the ArrayDimensions is set as identified by <number> values. If no <number> is set, the corresponding dimension is set to 0, indicating an unknown size. If no number is provided at all the ArrayDimensions can be omitted. If no brackets are provided, it identifies a scalar DataType and the ValueRank is set to the corresponding value (see OPC 10000-3). In addition, ArrayDimensions is set to null or is omitted. If it can be Any or ScalarOrOneDimension, the value is put into “{<value>}”, so either “{Any}” or “{ScalarOrOneDimension}” and the ValueRank is set to the corresponding value (see OPC 10000-3) and the ArrayDimensions is set to null or is omitted. Examples are given in Table 3.

| Notation | DataType | ValueRank | ArrayDimensions | Description |
|---|---|---|---|---|
| Int32 | Int32 | -1 | omitted or null | A scalar Int32. |
| Int32[] | Int32 | 1 | omitted or {0} | Single-dimensional array of Int32 with an unknown size. |
| Int32[][] | Int32 | 2 | omitted or {0,0} | Two-dimensional array of Int32 with unknown sizes for both dimensions. |
| Int32[3][] | Int32 | 2 | {3,0} | Two-dimensional array of Int32 with a size of 3 for the first dimension and an unknown size for the second dimension. |
| Int32[5][3] | Int32 | 2 | {5,3} | Two-dimensional array of Int32 with a size of 5 for the first dimension and a size of 3 for the second dimension. |
| Int32{Any} | Int32 | -2 | omitted or null | An Int32 where it is unknown if it is scalar or array with any number of dimensions. |
| Int32{ScalarOrOneDimension} | Int32 | -3 | omitted or null | An Int32 where it is either a single-dimensional array or a scalar. |
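The notation in the table above is regular enough to be decoded mechanically. The following Python sketch is one possible reading of it, not part of this specification: the names `parse_notation` and `ParsedNotation` are illustrative assumptions, and where the table allows "omitted or {0}" the sketch picks the explicit form with 0 for each unknown size.

```python
import re
from typing import NamedTuple, Optional, Tuple

# ValueRank values as they appear in the examples table (see OPC 10000-3).
VALUE_RANK_SCALAR = -1
VALUE_RANK_ANY = -2
VALUE_RANK_SCALAR_OR_ONE_DIMENSION = -3


class ParsedNotation(NamedTuple):
    """Hypothetical result type; None for array_dimensions means 'omitted or null'."""
    data_type: str
    value_rank: int
    array_dimensions: Optional[Tuple[int, ...]]


# DataType name, optionally followed by bracket pairs or a {Any}/{ScalarOrOneDimension} suffix.
_PATTERN = re.compile(
    r"^(?P<type>[A-Za-z_]\w*)"
    r"(?:(?P<dims>(?:\[\d*\])+)|\{(?P<special>Any|ScalarOrOneDimension)\})?$"
)


def parse_notation(notation: str) -> ParsedNotation:
    """Decode a DataType notation string such as 'Int32[3][]'."""
    match = _PATTERN.match(notation.strip())
    if match is None:
        raise ValueError(f"unsupported notation: {notation!r}")
    data_type = match.group("type")
    special = match.group("special")
    if special == "Any":
        return ParsedNotation(data_type, VALUE_RANK_ANY, None)
    if special == "ScalarOrOneDimension":
        return ParsedNotation(data_type, VALUE_RANK_SCALAR_OR_ONE_DIMENSION, None)
    dims = match.group("dims")
    if dims is None:
        return ParsedNotation(data_type, VALUE_RANK_SCALAR, None)
    # An empty bracket pair means an unknown size, which is encoded as 0.
    sizes = tuple(int(d) if d else 0 for d in re.findall(r"\[(\d*)\]", dims))
    return ParsedNotation(data_type, len(sizes), sizes)
```

For arrays, the ValueRank is simply the number of bracket pairs, which is why the sketch derives it from the length of the parsed dimension tuple.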

The TypeDefinition is specified for Objects and Variables.

The TypeDefinition column specifies a symbolic name for a NodeId, i.e. the specified Node points with a HasTypeDefinition Reference to the corresponding Node.

The ModellingRule of the referenced component is provided by specifying the symbolic name of the rule in the ModellingRule column. In the AddressSpace, the Node shall use a HasModellingRule Reference to point to the corresponding ModellingRule Object.

If the NodeId of a DataType is provided, the symbolic name of the Node representing the DataType shall be used.

Nodes of all other NodeClasses cannot be defined in the same table; therefore only the used ReferenceType, their NodeClass and their BrowseName are specified. A reference to another part of this document points to their definition.

Table 4 illustrates the table. If no components are provided, the DataType, TypeDefinition and ModellingRule columns may be omitted and only a Comment column is introduced to point to the Node definition.

| Attribute | Value |
|---|---|
| Attribute name | Attribute value. If it is an optional Attribute that is not set, “--” will be used. |

| References | NodeClass | BrowseName | DataType | TypeDefinition | ModellingRule |
|---|---|---|---|---|---|
| ReferenceType name | NodeClass of the target Node. | BrowseName of the target Node. If the Reference is to be instantiated by the server, then the value of the target Node’s BrowseName is “--”. | DataType of the referenced Node, only applicable for Variables. | TypeDefinition of the referenced Node, only applicable for Variables and Objects. | Referenced ModellingRule of the referenced Object. |

NOTE Notes referencing footnotes of the table content.

Components of Nodes can be complex, that is, they can contain components themselves. The TypeDefinition, NodeClass, DataType and ModellingRule can be derived from the type definitions, and the symbolic name can be created as defined in Section 3.3.3.1. Therefore, those contained components are not explicitly specified; they are implicitly specified by the type definitions.

3.3.2 NodeIds and BrowseNames

3.3.2.1 NodeIds

The NodeIds of all Nodes described in this standard are only symbolic names. Annex B defines the actual NodeIds.

The symbolic name of each Node defined in this specification is its BrowseName, or, when it is part of another Node, the BrowseName of the other Node, a “.”, and the BrowseName of itself. In this case “part of” means that the whole has a HasProperty or HasComponent Reference to its part. Since all Nodes not being part of another Node have a unique name in this specification, the symbolic name is unique.
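The construction of a symbolic name can be sketched in a few lines of Python. The function name `symbolic_name` and the example BrowseNames are hypothetical, and the sketch assumes the rule is applied recursively when a part itself has parts.

```python
from typing import Sequence


def symbolic_name(browse_name_path: Sequence[str]) -> str:
    """Join the BrowseNames from the outermost Node down to the Node itself with '.'."""
    if not browse_name_path:
        raise ValueError("at least one BrowseName is required")
    if any(not name for name in browse_name_path):
        raise ValueError("BrowseNames must not be empty")
    return ".".join(browse_name_path)


# Hypothetical names, not taken from this specification.
top_level = symbolic_name(["ExampleType"])
component = symbolic_name(["ExampleType", "ExampleComponent"])
```

A Node that is not part of another Node keeps its plain BrowseName, which is the single-element case above.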

The namespace for all NodeIds defined in this specification is defined in Table 200. The NamespaceIndex for this namespace is Server-specific and depends on the position of the namespace URI in the server namespace table.

Note that this specification not only defines concrete Nodes, but also requires that some Nodes shall be generated, for example one for each Session running on the Server. The NodeIds of those Nodes are Server-specific, including the namespace. But the NamespaceIndex of those Nodes cannot be the NamespaceIndex used for the Nodes defined in this specification, because they are not defined by this specification but generated by the Server.

3.3.2.2 BrowseNames

The text part of the BrowseNames for all Nodes defined in this specification is specified in the tables defining the Nodes. The NamespaceIndex for all BrowseNames defined in this specification is defined in Annex A.

If the BrowseName is not defined by this specification, a namespace index prefix like ‘0:EngineeringUnits’ or ‘2:DeviceRevision’ is added to the BrowseName. This is typically necessary if a Property of another specification is overwritten or used in the OPC UA types defined in this specification. Table 200 provides a list of namespaces and their indexes as used in this specification.
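A reader of the Node tables can separate the optional namespace index prefix from the text part of a BrowseName as sketched below. This is an illustration only: `parse_browse_name`, `QualifiedName` as a Python type, and the `default_index` parameter (standing in for the server-specific index of this specification's namespace) are assumptions.

```python
from typing import NamedTuple


class QualifiedName(NamedTuple):
    """Simplified stand-in for an OPC UA QualifiedName."""
    namespace_index: int
    name: str


def parse_browse_name(text: str, default_index: int) -> QualifiedName:
    """Split 'N:Name' into index and name; names without a prefix get default_index."""
    prefix, separator, rest = text.partition(":")
    if separator and prefix.isascii() and prefix.isdigit():
        return QualifiedName(int(prefix), rest)
    return QualifiedName(default_index, text)
```

Names without a numeric prefix are taken to belong to the namespace of this specification, whose index differs from server to server and is therefore passed in by the caller.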

3.3.3 Common Attributes

3.3.3.1 General

The Attributes of Nodes, their DataTypes and descriptions are defined in OPC 10000-3. Attributes not marked as optional are mandatory and shall be provided by a Server. The following tables define if the Attribute value is defined by this specification or if it is server-specific.

For all Nodes specified in this specification, the Attributes named in Table 5 shall be set as specified in the table.

| Attribute | Value |
|---|---|
| DisplayName | The DisplayName is a LocalizedText. Each server shall provide the DisplayName identical to the BrowseName of the Node for the LocaleId “en”. Whether the server provides translated names for other LocaleIds is server-specific. |
| Description | Optionally a server-specific description is provided. |
| NodeClass | Shall reflect the NodeClass of the Node. |
| NodeId | The NodeId is described by BrowseNames as defined in 3.3.2.1. |
| WriteMask | Optionally the WriteMask Attribute can be provided. If the WriteMask Attribute is provided, it shall set all non-server-specific Attributes to not writable. For example, the Description Attribute may be set to writable since a Server may provide a server-specific description for the Node. The NodeId shall not be writable, because it is defined for each Node in this specification. |
| UserWriteMask | Optionally the UserWriteMask Attribute can be provided. The same rules as for the WriteMask Attribute apply. |
| RolePermissions | Optionally server-specific role permissions can be provided. |
| UserRolePermissions | Optionally the role permissions of the current Session can be provided. The value is server-specific and depends on the RolePermissions Attribute (if provided) and the current Session. |
| AccessRestrictions | Optionally server-specific access restrictions can be provided. |

3.3.3.2 Objects

For all Objects specified in this specification, the Attributes named in Table 6 shall be set as specified in the table. The definitions for the Attributes can be found in OPC 10000-3.

| Attribute | Value |
|---|---|
| EventNotifier | Whether the Node can be used to subscribe to Events or not is server-specific. |

3.3.3.3 Variables

For all Variables specified in this specification, the Attributes named in Table 7 shall be set as specified in the table. The definitions for the Attributes can be found in OPC 10000-3.

| Attribute | Value |
|---|---|
| MinimumSamplingInterval | Optionally, a server-specific minimum sampling interval is provided. |
| AccessLevel | The access level for Variables used for type definitions is server-specific; for all other Variables defined in this specification, the access level shall allow reading; other settings are server-specific. |
| UserAccessLevel | The value for the UserAccessLevel Attribute is server-specific. It is assumed that all Variables can be accessed by at least one user. |
| Value | For Variables used as InstanceDeclarations, the value is server-specific; otherwise it shall represent the value described in the text. |
| ArrayDimensions | If the ValueRank does not identify an array of a specific dimension (i.e. ValueRank <= 0) the ArrayDimensions can either be set to null or the Attribute is missing. This behaviour is server-specific. If the ValueRank specifies an array of a specific dimension (i.e. ValueRank > 0) then the ArrayDimensions Attribute shall be specified in the table defining the Variable. |
| Historizing | The value for the Historizing Attribute is server-specific. |
| AccessLevelEx | If the AccessLevelEx Attribute is provided, it shall have the bits 8, 9, and 10 set to 0, meaning that read and write operations on an individual Variable are atomic, and arrays can be partly written. |
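The AccessLevelEx requirement in the table above amounts to a simple bit mask test. The sketch below is illustrative: the function name is hypothetical, and the flag names attached to bits 8, 9 and 10 reflect one reading of OPC 10000-3 and should be checked against that document.

```python
# Bit positions named in the table above; the flag names are an assumption
# based on OPC 10000-3 and are not defined by this specification.
NONATOMIC_READ = 1 << 8
NONATOMIC_WRITE = 1 << 9
WRITE_FULL_ARRAY_ONLY = 1 << 10
RESTRICTED_BITS = NONATOMIC_READ | NONATOMIC_WRITE | WRITE_FULL_ARRAY_ONLY


def access_level_ex_is_conformant(access_level_ex: int) -> bool:
    """Return True if bits 8, 9 and 10 of an AccessLevelEx value are all 0."""
    return (access_level_ex & RESTRICTED_BITS) == 0
```

All lower bits, such as those granting read or write access, are ignored by this check, because the table constrains only bits 8 to 10.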

3.3.3.4 VariableTypes

For all VariableTypes specified in this specification, the Attributes named in Table 8 shall be set as specified in the table. The definitions for the Attributes can be found in OPC 10000-3.

| Attributes | Value |
|---|---|
| Value | Optionally a server-specific default value can be provided. |
| ArrayDimensions | If the ValueRank does not identify an array of a specific dimension (i.e. ValueRank <= 0) the ArrayDimensions can either be set to null or the Attribute is missing. This behaviour is server-specific. If the ValueRank specifies an array of a specific dimension (i.e. ValueRank > 0) then the ArrayDimensions Attribute shall be specified in the table defining the VariableType. |

3.3.3.5 Methods

For all Methods specified in this specification, the Attributes named in Table 9 shall be set as specified in the table. The definitions for the Attributes can be found in OPC 10000-3.

| Attributes | Value |
|---|---|
| Executable | All Methods defined in this specification shall be executable (Executable Attribute set to “True”), unless it is defined differently in the Method definition. |
| UserExecutable | The value of the UserExecutable Attribute is server-specific. It is assumed that all Methods can be executed by at least one user. |

4 General information on Machine Vision and OPC UA

4.1 Introduction to Machine Vision systems

Machine vision systems are immensely diverse. This specification is based on a conceptual model of what constitutes a machine vision system’s functionality. Making good use of the specification requires an understanding of this conceptual model. It is touched on only briefly in this section; more details can be found in Annex B.

A machine vision system is any computer system, smart camera, vision sensor or even any other component that has the capability to record and process digital images or video streams for the shop floor or other industrial markets, typically with the aim of extracting information from this data.

Digital images or video streams represent data in a general sense, comprising multiple spatial dimensions (e.g. 1D scanner lines, 2D camera images, 3D point clouds, image sequences, etc.) acquired by any kind of imaging technique (e.g. visible light, infrared, ultraviolet, x-ray, radar, ultrasonic, virtual imaging etc.).

With respect to a specific machine vision task, the output of a machine vision system can be raw or pre-processed images or any image-based measurements, inspection results, process control data, robot guidance data, etc.

Machine vision therefore covers a very broad range of systems as well as of applications.

System types range from small sensors and smart cameras to multi-computer setups with diverse sensor equipment.

Applications include identification (like DataMatrix code, bar code or character recognition), pose determination (e.g. for robot guidance), assembly checks, gauging up to very high accuracy, surface inspection, color identification, etc.

In industrial production, a machine vision system is typically acting under the control and supervision of a machine control system, usually a PLC. There are many variations to this setup, depending on the type of product to be processed, e.g. individual parts or reel material, the organization of production etc.

A common situation in the production of individual work pieces is that a PLC informs the machine vision system about the arrival of a new part by sending a start signal, then waits until the machine vision system has answered with a result of some kind, e.g. quality information (passed/failed), a measurement value (size), or position information (x- and y-coordinates, rotation, possibly z-coordinate, or full pose in the case of a 3D system), and then continues processing the work piece based on the information given by the vision system. Traditionally, the interfaces used for communication between a PLC and a machine vision system are digital I/O, the various types of field buses and industrial Ethernet systems on the market, and also plain Ethernet for the transmission of bulk data.

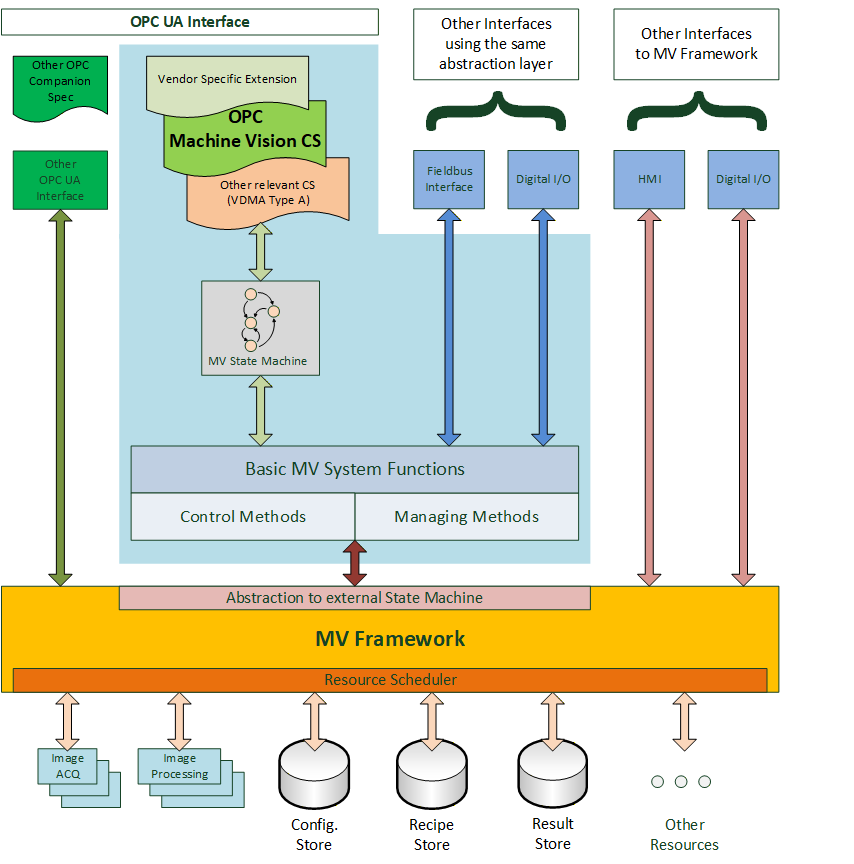

Figure 1 gives a generalized view on a machine vision system in the context of this companion specification. It assumes that there is some machine vision framework responsible for the acquisition and processing of the images. This framework is completely implementation specific to the system and is outside the scope of this companion specification.

This underlying system is currently presented to the “outside world”, e.g. the PLC, by various interfaces like digital I/O or field bus, typically using vendor specific protocol definitions. The interface described in this specification may co-exist with these interfaces and offer an additional view on the system or it may be used as the only interface to the system, depending on the requirements of the particular application.

The system may also be exposed through OPC UA interfaces according to other companion specifications. For example, DataMatrix code readers are by their nature machine vision systems but can also be exposed as systems adhering to the Auto ID specification. System vendors can of course also add their own OPC UA interfaces.

This companion specification provides a particular abstraction of a system envisioned to be running in an automated production environment where “automated” is meant in a very broad sense. A test bank for analyzing individual parts can be viewed as automated in that the press of a button by the operator starts the task of the machine vision system.

This abstraction may reflect the inner workings of the machine vision framework or it may be a layer on top of the framework presenting a view of it which is only loosely related to its interior construction.

The basic assumption of the model is that a machine vision system in a production environment goes through a sequence of states which are of interest to and may be influenced by the outside world.

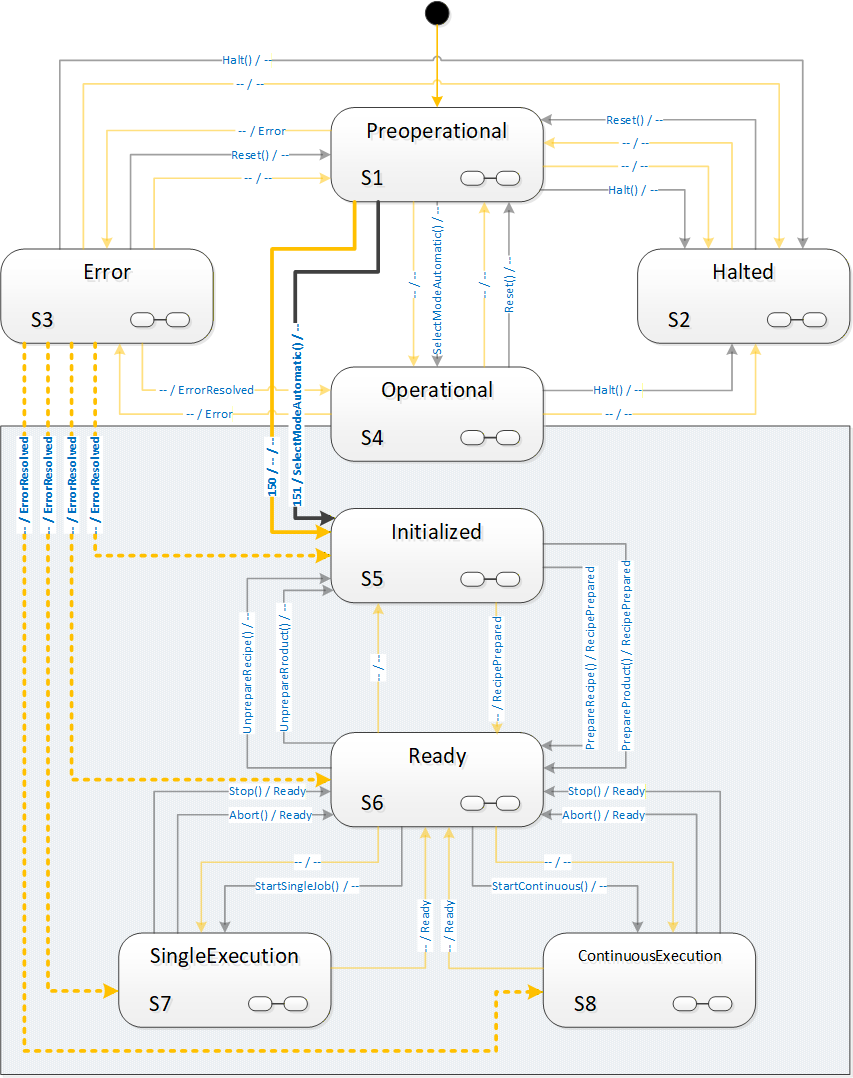

Therefore, a core element of this companion specification is a state machine view of the machine vision system.
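To make the state machine idea concrete, the following Python sketch implements a generic finite-state machine from a list of states, an initial state and a transition table. It is a minimal illustration only: the states `Idle`, `Busy` and `Error` and the event names are hypothetical and are not the states defined by this specification.

```python
from typing import Dict, Iterable, Tuple


class StateMachine:
    """Generic finite-state machine: exactly one current state at any time."""

    def __init__(self, states: Iterable[str], initial: str,
                 transitions: Dict[Tuple[str, str], str]) -> None:
        self.states = frozenset(states)
        if initial not in self.states:
            raise ValueError(f"unknown initial state: {initial!r}")
        for (source, _event), target in transitions.items():
            if source not in self.states or target not in self.states:
                raise ValueError(f"transition uses unknown state: {source!r} -> {target!r}")
        self.transitions = dict(transitions)
        self.current = initial

    def fire(self, event: str) -> str:
        """Apply an external input; raise ValueError if it is not allowed in the current state."""
        key = (self.current, event)
        if key not in self.transitions:
            raise ValueError(f"event {event!r} not allowed in state {self.current!r}")
        self.current = self.transitions[key]
        return self.current


# Hypothetical states and events, chosen only for illustration.
machine = StateMachine(
    states=["Idle", "Busy", "Error"],
    initial="Idle",
    transitions={
        ("Idle", "start"): "Busy",
        ("Busy", "done"): "Idle",
        ("Busy", "fault"): "Error",
        ("Error", "reset"): "Idle",
    },
)
```

An external party such as a PLC would observe `machine.current` and influence it through `fire`, which mirrors the idea that the states are both of interest to and influenced by the outside world.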

Also, a machine vision system may require information from the outside world, in addition to the information it gathers itself by image acquisition, e.g. information about the type of product to be processed. And it will typically pass information to the outside world, e.g. results from the processing.

Therefore, in addition to the state machine, a set of methods and data types is required to allow for this flow of information. Due to the diverse nature of machine vision systems and their applications, these data types will have to allow for vendor- and application-specific extensions.

The intention of the state machine, the methods, as well as the data types, is to provide a framework allowing for standardized integration of machine vision systems into automated production systems, and guidance for filling in the application-specific areas.

Of course, vendors will always be able to extend this specification and provide additional services according to the specific capabilities of their systems and the particular applications.

4.2 Introduction to OPC Unified Architecture

4.2.1 What is OPC UA?

OPC UA is an open and royalty free set of standards designed as a universal communication protocol. While there are numerous communication solutions available, OPC UA has key advantages:

A state-of-the-art security model (see OPC 10000-2).

A fault tolerant communication protocol.

An information modelling framework that allows application developers to represent their data in a way that makes sense to them.

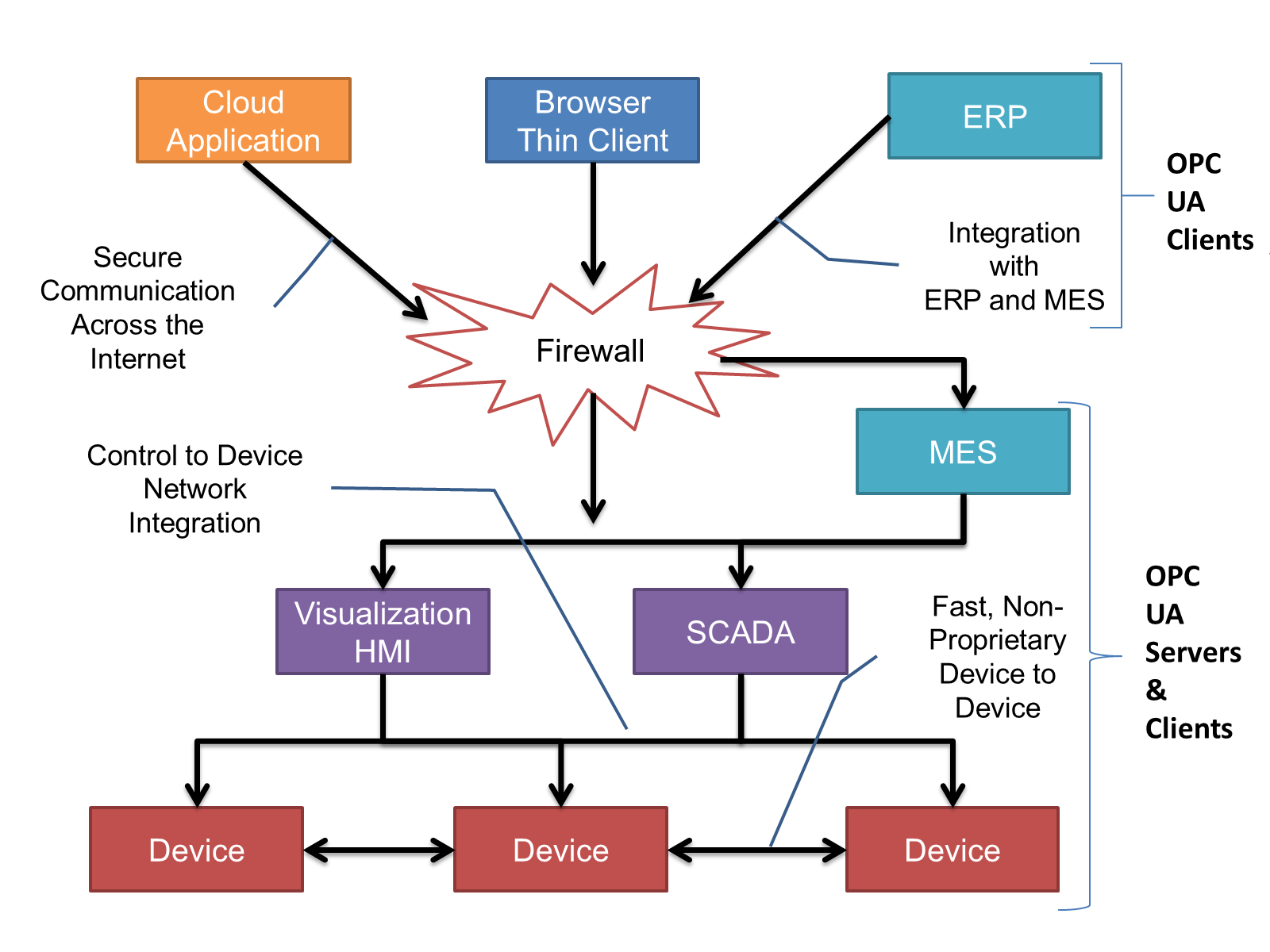

OPC UA has a broad scope which delivers economies of scale for application developers. This means that a larger number of high quality applications at a reasonable cost are available. When combined with semantic models such as OPC Machine Vision, OPC UA makes it easier for end users to access data via generic commercial applications.

The OPC UA model is scalable from small devices to ERP systems. OPC UA Servers process information locally and then provide that data in a consistent format to any application requesting data, for example ERP, MES, PMS, Maintenance Systems, HMI, Smartphone or a standard Browser. For a more complete overview see OPC 10000-1.

4.2.2 Basics of OPC UA

As an open standard, OPC UA is based on standard internet technologies, like TCP/IP, HTTP and WebSockets.

As an extensible standard, OPC UA provides a set of Services (see OPC 10000-4) and a basic information model framework. This framework provides an easy manner for creating and exposing vendor defined information in a standard way. More importantly all OPC UA Clients are expected to be able to discover and use vendor-defined information. This means OPC UA users can benefit from the economies of scale that come with generic visualization and historian applications. This specification is an example of an OPC UA Information Model designed to meet the needs of developers and users.

OPC UA Clients can be any consumer of data, from another device on the network to browser-based thin clients and ERP systems. The full scope of OPC UA applications is shown in Figure 2.

OPC UA provides a robust and reliable communication infrastructure having mechanisms for handling lost messages, failover, heartbeat, etc. With its binary encoded data, it offers a high-performing data exchange solution. Security is built into OPC UA as security requirements become more and more important especially since environments are connected to the office network or the internet and attackers are starting to focus on automation systems.

4.2.3 Information modelling in OPC UA

4.2.3.1 Concepts

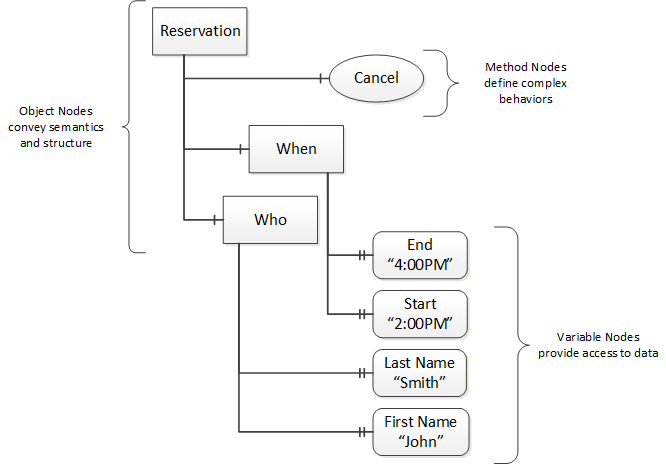

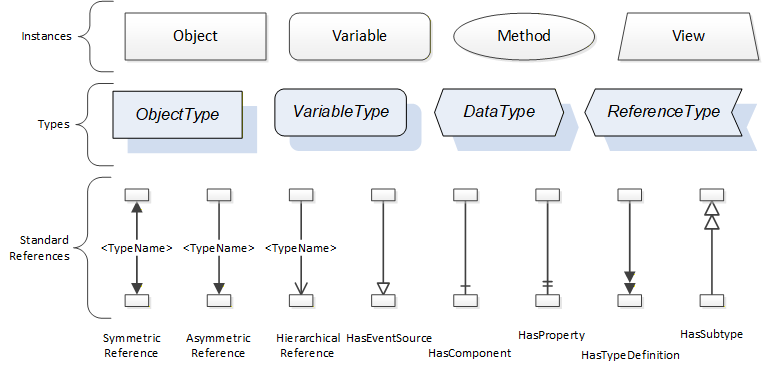

OPC UA provides a framework that can be used to represent complex information as Objects in an AddressSpace which can be accessed with standard services. These Objects consist of Nodes connected by References. Different classes of Nodes convey different semantics. For example, a Variable Node represents a value that can be read or written. The Variable Node has an associated DataType that can define the actual value, such as a string, float, structure etc. It can also describe the Variable value as a variant. A Method Node represents a function that can be called. Every Node has a number of Attributes, including a unique identifier called a NodeId and a non-localized name called a BrowseName. An Object representing a ‘Reservation’ is shown in Figure 3.

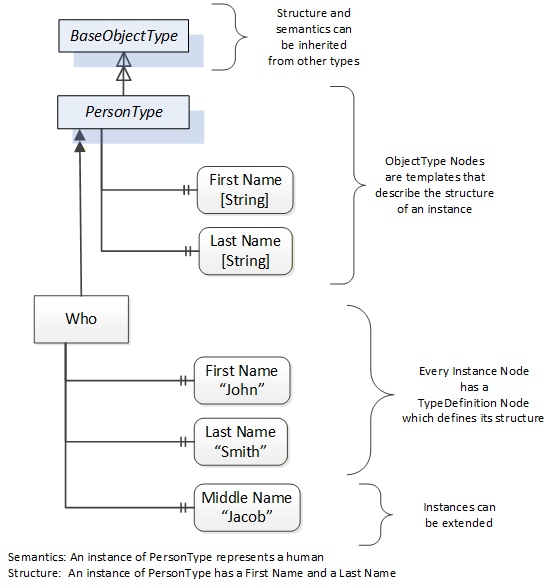

Object and Variable Nodes represent instances and they always reference a TypeDefinition (ObjectType or VariableType) Node which describes their semantics and structure. Figure 4 illustrates the relationship between an instance and its TypeDefinition.

The type Nodes are templates that define all of the children that can be present in an instance of the type. In the example in Figure 4 the PersonType ObjectType defines two children: First Name and Last Name. All instances of PersonType are expected to have the same children with the same BrowseNames. Within a type the BrowseNames uniquely identify the children. This means Client applications can be designed to search for children based on the BrowseNames from the type instead of NodeIds. This eliminates the need for manual reconfiguration of systems if a Client uses types that multiple Servers implement.
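The benefit of locating children by BrowseName can be illustrated with a small in-memory model. The sketch below is not an OPC UA client: `Node` and `find_child` are hypothetical helpers, the NodeId strings are invented, and BrowseNames are simplified to plain strings without a namespace.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Node:
    node_id: str      # differs between servers for instances
    browse_name: str  # fixed by the type, identical on every server
    children: List["Node"] = field(default_factory=list)


def find_child(parent: Node, browse_name: str) -> Optional[Node]:
    """Return the first child with the given BrowseName, or None if there is none."""
    for child in parent.children:
        if child.browse_name == browse_name:
            return child
    return None


# Two servers expose an instance of the same type under different NodeIds.
person_on_server_a = Node("ns=2;i=1001", "Person", [
    Node("ns=2;i=1002", "First Name"),
    Node("ns=2;i=1003", "Last Name"),
])
person_on_server_b = Node("ns=4;s=Person1", "Person", [
    Node("ns=4;s=Person1.Last", "Last Name"),
    Node("ns=4;s=Person1.First", "First Name"),
])

last_name_a = find_child(person_on_server_a, "Last Name")
last_name_b = find_child(person_on_server_b, "Last Name")
```

The same lookup succeeds against both servers even though the NodeIds and the order of the children differ, which is what removes the need for per-server reconfiguration.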

OPC UA also supports the concept of sub-typing. This allows a modeller to take an existing type and extend it. There are rules regarding sub-typing defined in OPC 10000-3, but in general they allow the extension of a given type or the restriction of a DataType. For example, the modeller may decide that the existing ObjectType in some cases needs an additional Variable. The modeller can create a subtype of the ObjectType and add the Variable. A Client that is expecting the parent type can treat the new type as if it were of the parent type. Regarding DataTypes, subtypes can only restrict. If a Variable is defined to have a numeric value, a subtype could restrict it to a float.

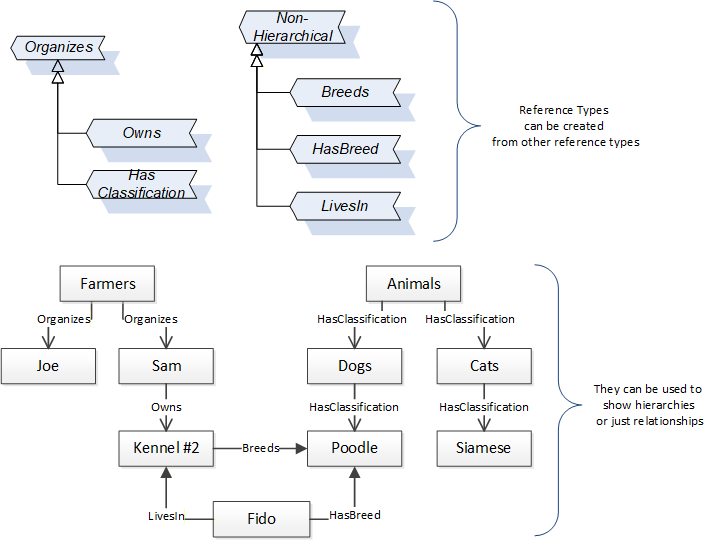

References allow Nodes to be connected in ways that describe their relationships. All References have a ReferenceType that specifies the semantics of the relationship. References can be hierarchical or non-hierarchical. Hierarchical references are used to create the structure of Objects and Variables. Non-hierarchical are used to create arbitrary associations. Applications can define their own ReferenceType by creating subtypes of an existing ReferenceType. Subtypes inherit the semantics of the parent but may add additional restrictions. Figure 5 depicts several References, connecting different Objects.

The figures above use a notation that was developed for the OPC UA specification. The notation is summarized in Figure 6. UML representations can also be used; however, the OPC UA notation is less ambiguous because there is a direct mapping from the elements in the figures to Nodes in the AddressSpace of an OPC UA Server.

A complete description of the different types of Nodes and References can be found in OPC 10000-3 and the base structure is described in OPC 10000-5.

The OPC UA specification defines a very wide range of functionality in its basic information model. It is not expected that all Clients or Servers support all functionality in the OPC UA specifications. OPC UA includes the concept of Profiles, which segment the functionality into testable, certifiable units. This allows the definition of functional subsets (that are expected to be implemented) within a companion specification. The Profiles do not restrict functionality, but generate requirements for a minimum set of functionality (see OPC 10000-7).

4.2.3.2 Namespaces

OPC UA allows information from many different sources to be combined into a single coherent AddressSpace. Namespaces are used to make this possible by eliminating naming and id conflicts between information from different sources. Namespaces in OPC UA have a globally unique string called a NamespaceUri and a locally unique integer called a NamespaceIndex. The NamespaceIndex is only unique within the context of a Session between an OPC UA Client and an OPC UA Server. The Services defined for OPC UA use the NamespaceIndex to specify the Namespace for qualified values.
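Because the NamespaceIndex is only locally unique, a client typically resolves it from the NamespaceUri for each server it talks to. The following Python sketch shows the lookup against a plain list standing in for a server's namespace table. The function name and the `urn:example:` URIs are hypothetical; only the base OPC UA namespace URI at index 0 is taken from the OPC UA standard.

```python
from typing import Sequence

OPC_UA_BASE_URI = "http://opcfoundation.org/UA/"  # always at index 0
EXAMPLE_MODEL_URI = "urn:example:vision-model"    # hypothetical URI


def namespace_index(namespace_table: Sequence[str], namespace_uri: str) -> int:
    """Return the position of namespace_uri in a server's namespace table."""
    try:
        return list(namespace_table).index(namespace_uri)
    except ValueError:
        raise LookupError(f"namespace {namespace_uri!r} is not known to this server") from None


# The same namespace sits at different positions on two hypothetical servers.
server_a_table = [OPC_UA_BASE_URI, "urn:example:server-a", EXAMPLE_MODEL_URI]
server_b_table = [OPC_UA_BASE_URI, "urn:example:server-b",
                  "urn:example:other-model", EXAMPLE_MODEL_URI]

index_on_a = namespace_index(server_a_table, EXAMPLE_MODEL_URI)
index_on_b = namespace_index(server_b_table, EXAMPLE_MODEL_URI)
```

A client that stores the NamespaceUri rather than the index can rebuild qualified values for any server by repeating this lookup when it connects.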

There are two types of values in OPC UA that are qualified with Namespaces: NodeIds and QualifiedNames. NodeIds are globally unique identifiers for Nodes. This means the same Node with the same NodeId can appear in many Servers. This, in turn, means Clients can have built-in knowledge of some Nodes. OPC UA Information Models generally define globally unique NodeIds for the TypeDefinitions defined by the Information Model.

QualifiedNames are non-localized names qualified with a Namespace. They are used for the BrowseNames of Nodes and allow the same names to be used by different information models without conflict. TypeDefinitions are not allowed to have children with duplicate BrowseNames; however, instances do not have that restriction.
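Informative example: the relationship between a NamespaceUri and a NamespaceIndex can be sketched as follows. The sketch assumes that a client has read the namespace table of a server into a plain Python list. The second URI and the helper names `namespace_index` and `qualify` are illustrative assumptions and are not defined by this specification.

```python
from dataclasses import dataclass

# Hypothetical namespace table as a client might read it from one server.
# Index 0 is reserved for the OPC UA base namespace; the second URI is an
# assumed placeholder used for illustration only.
NAMESPACE_TABLE = [
    "http://opcfoundation.org/UA/",
    "http://example.org/UA/MachineVision",
]


@dataclass(frozen=True)
class QualifiedName:
    namespace_index: int  # only meaningful for the server it was resolved on
    name: str             # non-localized name


def namespace_index(table, uri):
    """Resolve a globally unique NamespaceUri to the local NamespaceIndex."""
    return table.index(uri)  # raises ValueError if the URI is unknown


def qualify(table, uri, name):
    """Build a QualifiedName, e.g. a BrowseName, from a URI and a name."""
    return QualifiedName(namespace_index(table, uri), name)


browse_name = qualify(
    NAMESPACE_TABLE, "http://example.org/UA/MachineVision", "VisionSystemType"
)
```

Because the index may differ from server to server, a client would typically store the URI and resolve the index again for each connection.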

4.2.3.3 Companion Specifications

An OPC UA companion specification for an industry specific vertical market describes an Information Model by defining ObjectTypes, VariableTypes, DataTypes and ReferenceTypes that represent the concepts used in the vertical market, and potentially also well-defined Objects as entry points into the AddressSpace.

5 Use cases

A vision system assesses situations automatically through machine vision and machine evaluation. This document describes how a vision system is addressed via OPC UA and integrated in a superordinate or a peer-to-peer structure. The description covers all aspects relevant for operation.

Interaction of the client with the vision system

A vision system usually has the role of an OPC UA server, i.e. its states are exposed via an OPC UA server. This role is what this specification describes and defines.

The client system can control the vision system via OPC UA. The vision system may also be controlled by a different entity through a different interface.

The vision system reports important events – such as evaluation results and error states – automatically to a subscribed client.

In addition, the client can query data from the vision system at any time.

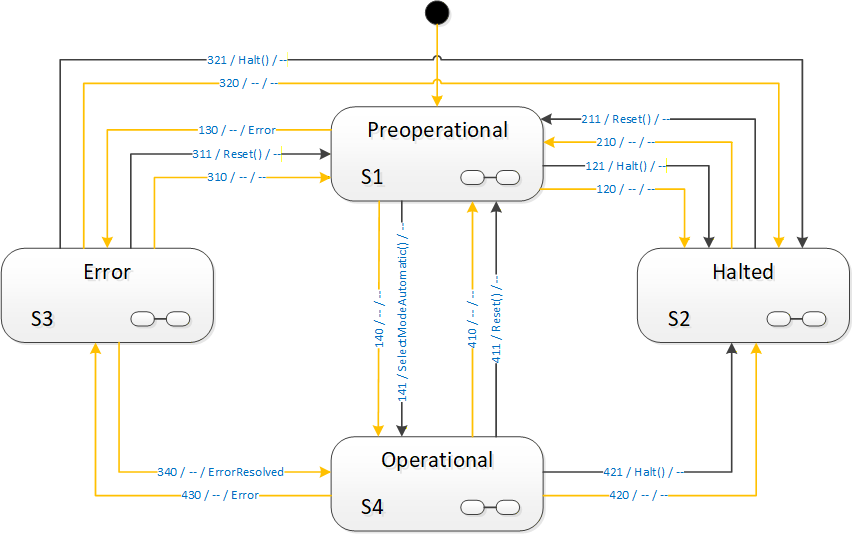

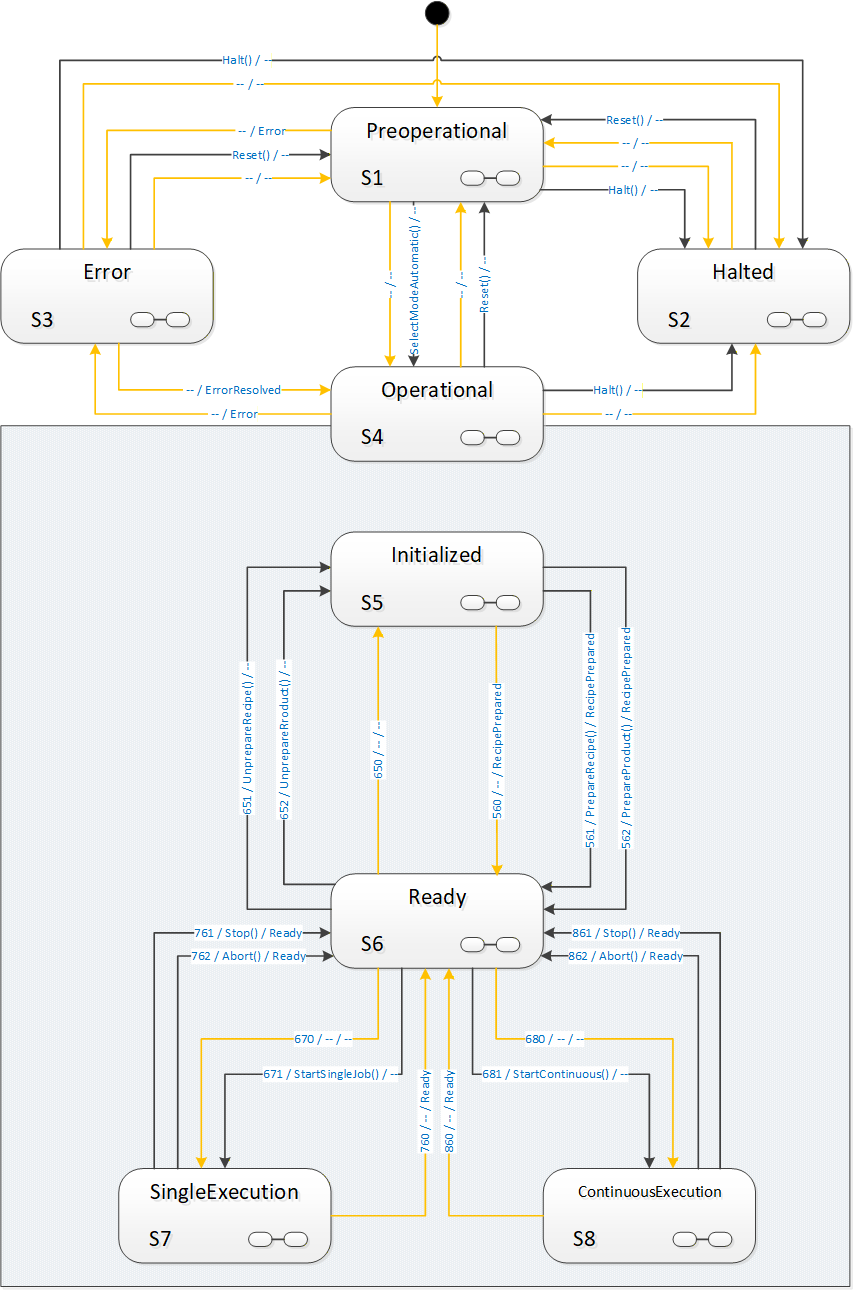

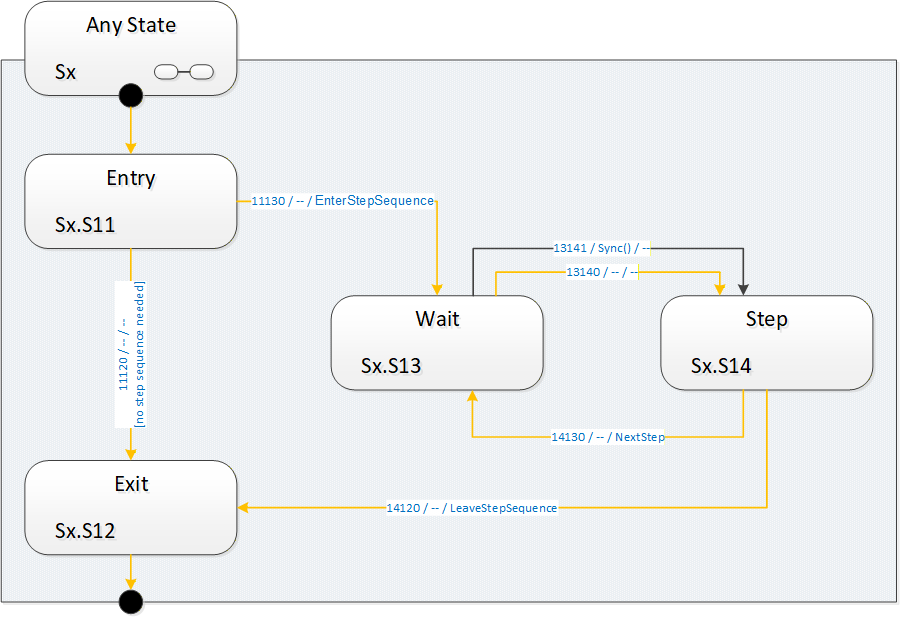

State Machine

The state machine model is an abstraction of a machine vision system, which maps the possible operational states of the machine vision system to a state model with a fixed number of states.

Each interaction of the client system with the vision system depends on the current state of the model and also the state and capabilities of the underlying vision system.

State changes are initiated by method calls from the client system or triggered by internal or external events. They may also be triggered by a secondary interface. Each state change is communicated to the client system.

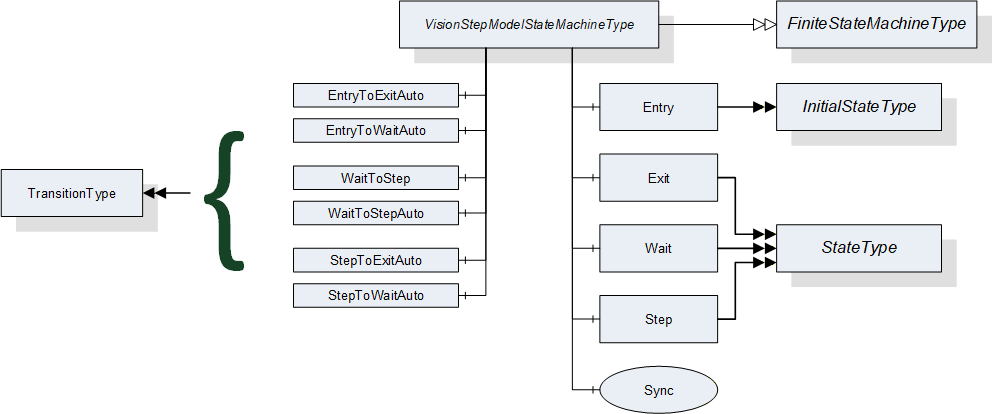

The state machine is described in more detail in Section 8.

Recipe Management

The properties, procedures and parameters that describe a machine vision task for the vision system are stored in a recipe.

Usually there are multiple usable recipes on a vision system.

This specification provides methods for activating, loading, and saving recipes.

Recipes are handled as binary objects. The interpretation of a recipe is not part of this specification.

For a detailed description of Recipe Management, please refer to B.1.

Result Transfer

The image processing results are transmitted to the client system asynchronously. This transmission includes information on product assignment, times, and statuses.

The detailed data format of a result is not included in this specification.

Error Management

There is an interface for error notification and interactive error management.

6 OPC Machine Vision information model overview

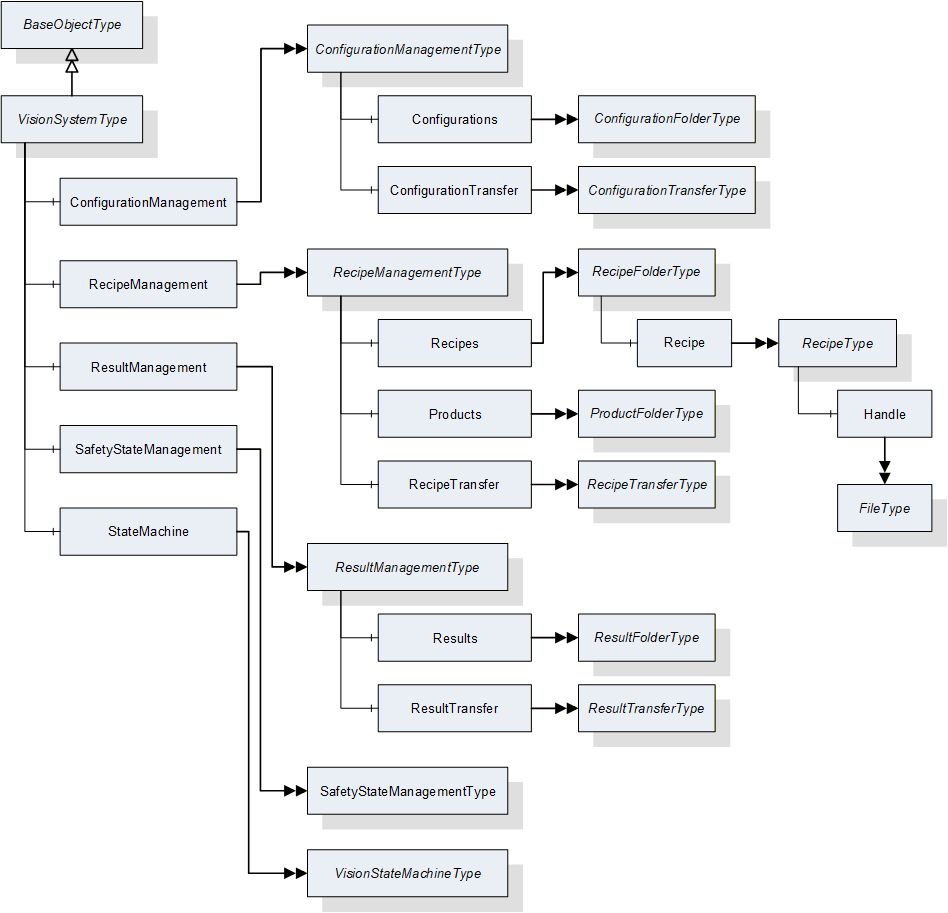

Figure 7 shows the main object types and the relations between them.

7 ObjectTypes for the Vision System in General

7.1 VisionSystemType

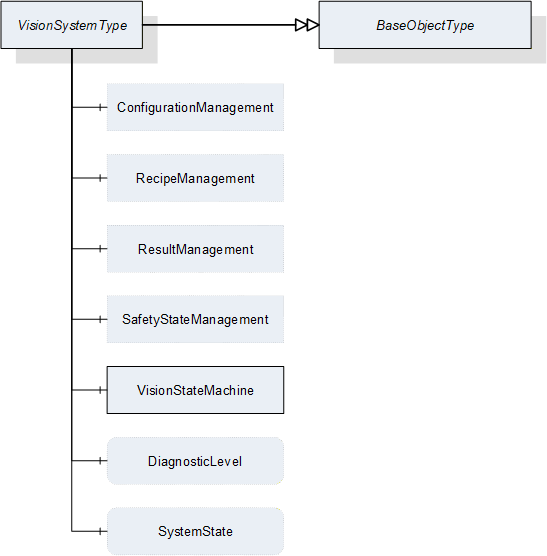

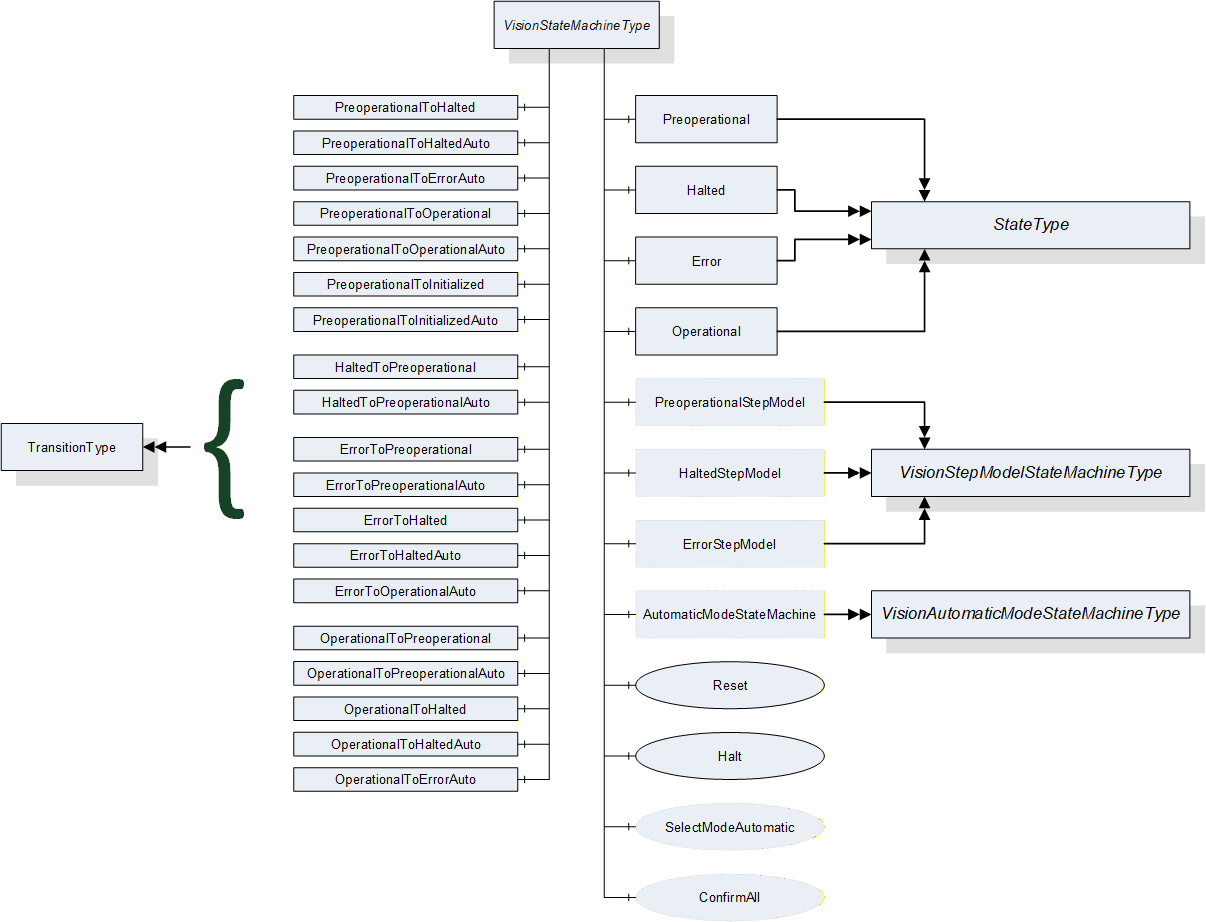

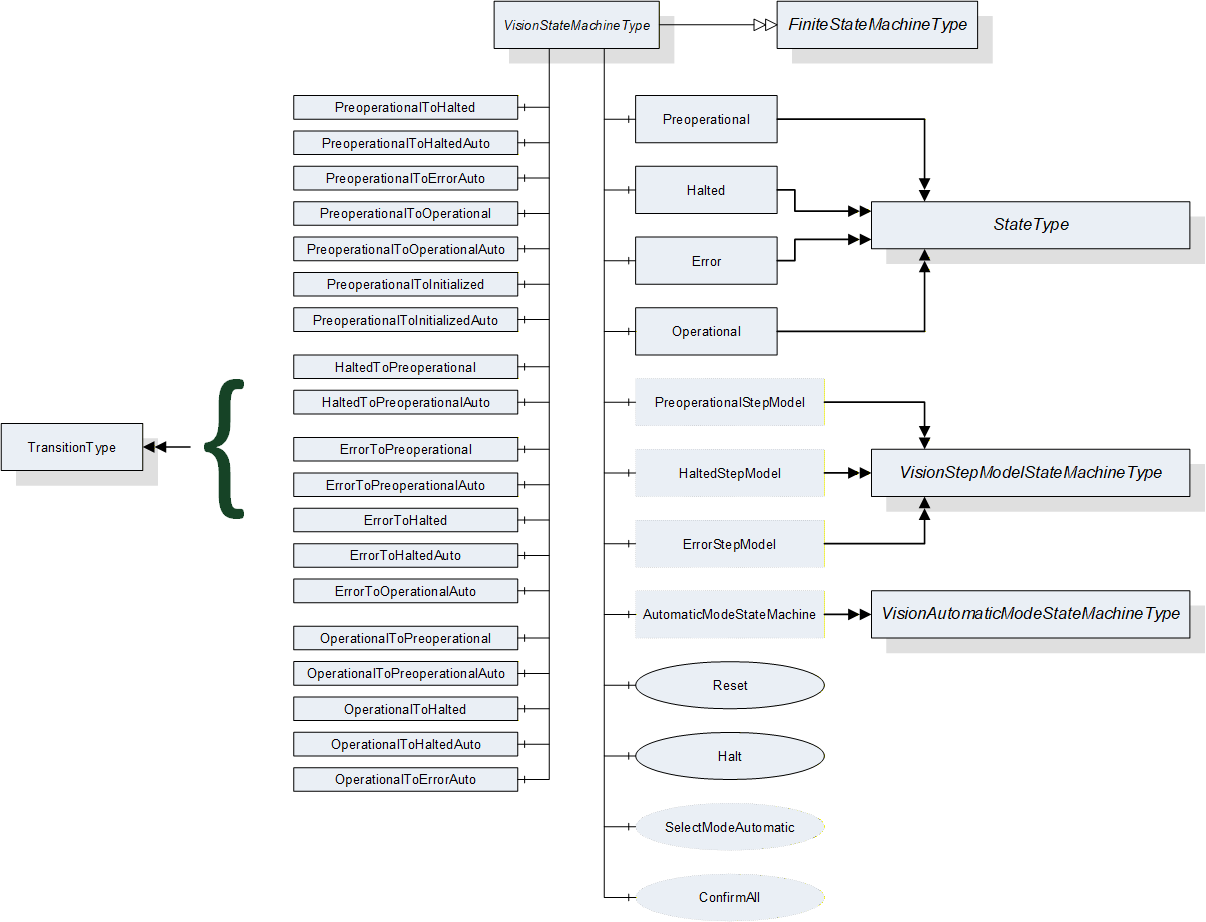

This ObjectType defines the representation of a machine vision system. Figure 8 shows the hierarchical structure and details of the composition. It is formally defined in Table 10.

Instances of this ObjectType provide a general communication interface for a machine vision system. This interface makes it possible to interact with this system independent of the knowledge of the internal structure and the underlying processes of the machine vision system.

System behavior is modeled with a mandatory hierarchical finite state machine.

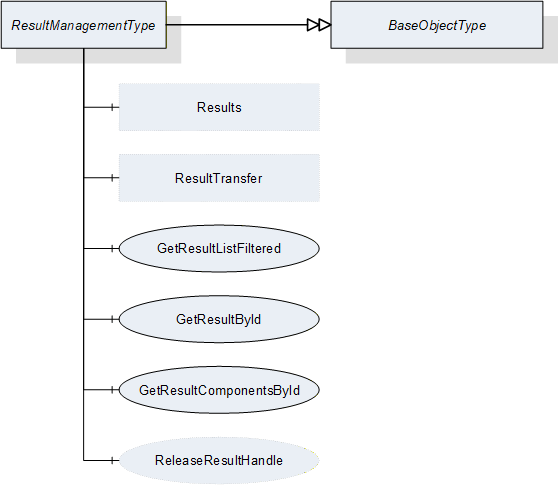

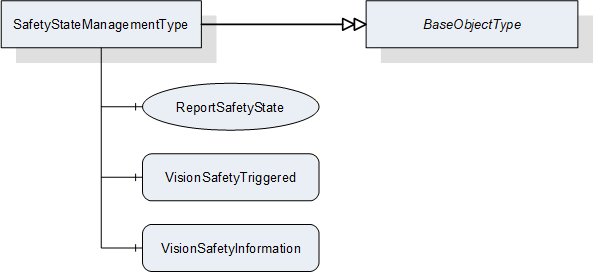

VisionSystemType contains four optional management objects, RecipeManagement, ConfigurationManagement, ResultManagement, and SafetyStateManagement. All of these provide access to the exposed functionality of the machine vision system.

| Attribute | Value | ||||

| BrowseName | VisionSystemType | ||||

| IsAbstract | False | ||||

| References | NodeClass | BrowseName | DataType | TypeDefinition | ModellingRule |

|---|---|---|---|---|---|

| Subtype of the BaseObjectType defined in OPC 10000-5 | |||||

| HasComponent | Object | ConfigurationManagement | -- | ConfigurationManagementType | Optional |

| HasComponent | Object | RecipeManagement | -- | RecipeManagementType | Optional |

| HasComponent | Object | ResultManagement | -- | ResultManagementType | Optional |

| HasComponent | Object | SafetyStateManagement | -- | SafetyStateManagementType | Optional |

| HasComponent | Object | VisionStateMachine | -- | VisionStateMachineType | Mandatory |

| HasComponent | Variable | DiagnosticLevel | UInt16 | BaseDataVariableType | Optional |

| HasComponent | Variable | SystemState | SystemStateDescriptionDataType | BaseDataVariableType | Optional |

ConfigurationManagement provides methods and properties required for managing the configuration of the vision system. ConfigurationManagementType is described in Section 7.2.

RecipeManagement provides functionality to add, remove, prepare, and retrieve vision system recipes. RecipeManagementType is described in Section 7.5.

ResultManagement provides methods and properties necessary for managing the results. ResultManagementType is described in Section 7.10.

SafetyStateManagement provides functionality to inform the vision system about the change of an external safety state. SafetyStateManagementType is described in Section 7.13.

VisionStateMachine provides information about the current state of the vision system and methods for controlling it. VisionStateMachineType is defined in Section 8.2.

DiagnosticLevel specifies the threshold for the severity of diagnostic messages to be generated by the server. More information can be found in Section 11.3.

SystemState represents the system state in terms of the SEMI E10 standard. More information can be found in Section 11.6.

7.2 ConfigurationManagementType

7.2.1 Overview

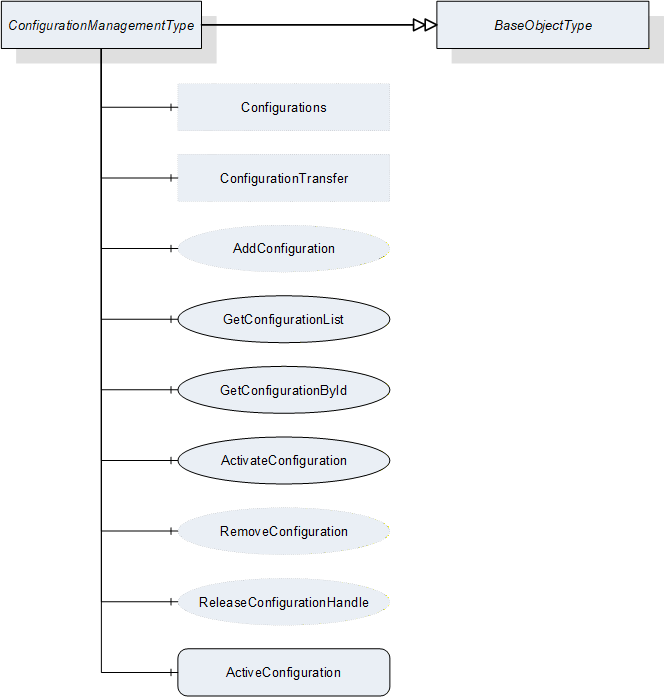

This ObjectType defines the representation of the machine vision system configuration management. Figure 9 shows the hierarchical structure and details of the composition. It is formally defined in Table 11.

Even supposedly identical vision systems will differ in some details. In order to produce the same results the vision systems have to be adjusted individually e.g. calibrated. Within this document, the set of all parameters that are needed to get the system working is called a configuration. Configurations can be used to align different vision systems that have the same capabilities, so that these systems produce the same results for the same recipes.

Instances of this ObjectType handle all configurations that are exposed by the system. Only one configuration can be active at a time. This active configuration affects all recipes used in the machine vision system. The configurations can optionally also be exposed in a folder, in order to provide access to the client.

Configurations are handled as files. Metadata of configurations can be viewed directly but not changed by the client. The interpretation of the configuration’s content is not part of this specification.

| Attribute | Value | ||||

| BrowseName | ConfigurationManagementType | ||||

| IsAbstract | False | ||||

| References | NodeClass | BrowseName | DataType | TypeDefinition | ModellingRule |

|---|---|---|---|---|---|

| Subtype of the BaseObjectType defined in OPC 10000-5 | |||||

| HasComponent | Object | ConfigurationTransfer | -- | ConfigurationTransferType | Optional |

| HasComponent | Object | Configurations | -- | ConfigurationFolderType | Optional |

| HasComponent | Method | AddConfiguration | -- | -- | Optional |

| HasComponent | Method | GetConfigurationList | -- | -- | Mandatory |

| HasComponent | Method | GetConfigurationById | -- | -- | Mandatory |

| HasComponent | Method | ReleaseConfigurationHandle | -- | -- | Optional |

| HasComponent | Method | RemoveConfiguration | -- | -- | Optional |

| HasComponent | Method | ActivateConfiguration | -- | -- | Mandatory |

| HasComponent | Variable | ActiveConfiguration | ConfigurationDataType | BaseDataVariableType | Mandatory |

ConfigurationTransfer is an instance of the ConfigurationTransferType defined in Section 7.4 and it is used to transfer the contents of a configuration by the temporary file transfer method defined in OPC 10000-5, Annex C.4.

Configurations is an instance of the ConfigurationFolderType and it is used to organize variables of DataType ConfigurationDataType which is defined in Section 12.9. If the server chooses to expose configuration information in the Address Space, the Object may contain the set of all configurations available on the system. This is implementation-defined. If a server does not expose configuration information in the Address Space, this Object is expected to be non-existent.

The DataTypes used in the ConfigurationManagementType are defined in OPC 10000-5 and in Section 11.6 of this specification.

7.2.2 ConfigurationManagementType methods

7.2.2.1 AddConfiguration

7.2.2.1.1 Overview

This method is used to add a configuration to the configuration management of the vision system. It concerns itself only with the metadata of the configuration; the actual content is transferred by an object of ConfigurationTransferType, which is defined in Section 7.4.

The intended behavior of this method for different input arguments is described in the following subsections.

Signature

AddConfiguration ([in] ConfigurationIdDataType externalId, [out] ConfigurationIdDataType internalId, [out] NodeId configuration, [out] Boolean transferRequired, [out] Int32 error);

| Argument | Description |

| externalId | Identification of the configuration used by the environment. This argument must not be empty. |

| internalId | System-wide unique ID for identifying a configuration. This ID is assigned by the vision system. |

| configuration | If the server chooses to represent the configuration in the Address Space, it shall return the NodeId of the newly created entry in the Configurations folder here. If the server uses only method-based configuration management, this shall be a null NodeId as defined in OPC 10000-3. |

| transferRequired | In this argument, the server returns whether the vision system assumes that a transfer of the file content of the configuration is required. Note that this is only a hint for the client. If the server returns TRUE, the client will have to assume that the vision system needs the configuration content and shall transfer it. If the server returns FALSE, the client may transfer the configuration content anyway. |

| error | 0 – OK Values > 0 are reserved for errors defined by this and future standards. Values < 0 shall be used for application-specific errors. |

| Attribute | Value | ||||

| BrowseName | AddConfiguration | ||||

| References | NodeClass | BrowseName | DataType | TypeDefinition | ModellingRule |

|---|---|---|---|---|---|

| HasProperty | Variable | InputArguments | Argument[] | PropertyType | Mandatory |

| HasProperty | Variable | OutputArguments | Argument[] | PropertyType | Mandatory |
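Informative example: the convention for the error argument (0 for OK, positive values reserved for errors defined by this and future standards, negative values for application-specific errors) can be sketched as a small client-side helper. The function name `classify_error` and the returned labels are assumptions made for illustration.

```python
def classify_error(code: int) -> str:
    """Classify the Int32 error output argument of a method call."""
    if code == 0:
        return "ok"
    if code > 0:
        # Reserved for errors defined by this and future standards.
        return "standard"
    # Negative values are application-specific.
    return "application"
```

A client could use such a helper to decide whether an error can be interpreted generically or needs vendor-specific handling.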

7.2.2.1.2 New ExternalId

If AddConfiguration is called with an ExternalId not yet existing in the configuration management of the vision system, it is expected that the vision system creates an appropriate management structure with an InternalId which is unique on the system. The server then returns this InternalId.

If the server chooses to represent all or selected configurations in the Address Space and if the new configuration matches the current selection criteria, the server shall create a new entry in the Configurations folder in the Address Space.

The method will return TRUE in the TransferRequired argument. Since the ExternalId does not yet exist in the configuration management of the vision system, it is expected the configuration does not yet exist either in the local configuration storage of the vision system, and therefore needs to be transferred.

7.2.2.1.3 Identically Existing ExternalId with identical configuration

If AddConfiguration is called with an ExternalId already existing in the configuration management of the vision system, it is expected that the vision system checks whether an identical version of the configuration already exists, provided that the content of the ExternalId allows for such a check. A way to perform this comparison without having to transfer the binary content first is offered by the optional hash value in the ExternalId: the client computes a hash over the contents of the configuration and passes that hash in the ExternalId. The server can then check this hash against a hash transmitted earlier, or it can compute a hash by itself over the contents of the configuration currently stored on the vision system side. For this procedure, the server needs to know the hash algorithm used by the client, which can be transmitted in the hashAlgorithm member of the ExternalId.

Note that the method has no way of checking this with the actual configuration content which is not yet known to the vision system.

The method will return FALSE in the TransferRequired argument if the method comes to the conclusion that the configuration already exists with identical content on the vision system. Note that the result is not binding for the client who may decide to transfer the configuration content anyway.

If the server represents configurations in the Address Space, no new entry shall be created in the configurations folder.
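Informative example: the hash-based comparison described above can be sketched as follows. The sketch assumes SHA-256 as the hash algorithm and treats a missing hash as "cannot compare", in which case it conservatively requests a transfer. Both choices, as well as the function names, are assumptions and are not mandated by this specification.

```python
import hashlib
from typing import Optional


def content_hash(content: bytes, algorithm: str = "sha256") -> bytes:
    """Hash the binary configuration content (client or server side)."""
    return hashlib.new(algorithm, content).digest()


def transfer_required(stored: Optional[bytes], offered: Optional[bytes]) -> bool:
    """Hint for the transferRequired output argument.

    Returns False only if both hashes are known and identical. If either
    hash is missing, no comparison is possible and a transfer is requested
    (an assumed, conservative policy).
    """
    if stored is None or offered is None:
        return True
    return stored != offered
```

For example, `transfer_required(content_hash(data), content_hash(data))` evaluates to `False` for any byte string `data`, because both sides hash identical content with the same algorithm.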

7.2.2.1.4 Identically Existing ExternalId with different configuration

If AddConfiguration comes to the conclusion that the content of the configuration to be transferred is different from the content already existing for this ExternalId, it shall return TRUE in the TransferRequired argument.

The behavior with respect to the management of the configuration metadata and configuration content is entirely application-defined. The vision system may decide to create a new management structure and add the configuration content to the local configuration store, or it may decide to re-use the existing ExternalId and overwrite the configuration content. In any case, the vision system shall create a new, system-wide unique InternalId for this configuration.

If the server chooses to represent configurations in the Address Space, the behavior with respect to these objects should mirror the behavior of the vision system in its internal configuration management.
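Informative example: the three cases for AddConfiguration (new ExternalId, existing ExternalId with identical content, existing ExternalId with different content) can be sketched with a toy in-memory store. The class `ConfigurationStore`, the string identifiers, the error value -1 for an empty ExternalId, the return of the existing InternalId in the identical case, and the choice to overwrite the existing entry in the "different content" case are all assumptions. The specification leaves these details to the application.

```python
import itertools
from dataclasses import dataclass
from typing import Optional


@dataclass
class Entry:
    internal_id: str
    external_id: str
    content_hash: Optional[bytes]


class ConfigurationStore:
    """Toy stand-in for the configuration management of a vision system."""

    def __init__(self):
        self._entries = {}                  # external_id -> Entry
        self._counter = itertools.count(1)  # source of unique InternalIds

    def _new_internal_id(self) -> str:
        return f"cfg-{next(self._counter)}"

    def add_configuration(self, external_id, content_hash=None):
        """Return (internal_id, transfer_required, error)."""
        if not external_id:
            return None, False, -1          # assumed application-specific error
        existing = self._entries.get(external_id)
        if existing is None:
            # New ExternalId: create the management structure, request transfer.
            entry = Entry(self._new_internal_id(), external_id, content_hash)
            self._entries[external_id] = entry
            return entry.internal_id, True, 0
        if content_hash is not None and existing.content_hash == content_hash:
            # Identical content already present: transfer is not required.
            return existing.internal_id, False, 0
        # Different content: a new InternalId is always created. This sketch
        # overwrites the existing entry; keeping both would also be allowed.
        entry = Entry(self._new_internal_id(), external_id, content_hash)
        self._entries[external_id] = entry
        return entry.internal_id, True, 0
```

Calling `add_configuration` three times with the same ExternalId and the hashes `h1`, `h1`, `h2` exercises the three cases in order.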

7.2.2.1.5 Local creation or editing of configurations

This is not, strictly speaking, a use case of the method AddConfiguration, but results are comparable, and therefore the use case is described here.

If a configuration is created locally on the vision system or is loaded onto the vision system by a different interface than the OPC Machine Vision interface, i.e. the configuration is added without using method AddConfiguration, then this configuration shall have a system-wide unique InternalId, just like a configuration added through the method.

If an existing configuration which was uploaded to the vision system through the method AddConfiguration, is locally changed, the ExternalId shall be removed from the changed version and it shall receive a new system-wide unique InternalId so that the two configurations cannot be confused. The vision system may record the history from which configuration it was derived.

If the server exposes configurations in the Address Space and if the locally created or edited configurations match the current filter criteria, then they shall be represented as nodes in the Configurations folder, with their system-wide unique InternalIds, but without ExternalIds.
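Informative example: the rule for locally edited configurations (remove the ExternalId, assign a new system-wide unique InternalId, optionally record the origin) can be sketched as follows. The `ConfigMeta` structure, the `derived_from` field, and the use of UUIDs as InternalIds are illustrative assumptions.

```python
import uuid
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ConfigMeta:
    internal_id: str
    external_id: Optional[str]
    derived_from: Optional[str] = None  # optional history, not required


def locally_edited(original: ConfigMeta) -> ConfigMeta:
    """Metadata for a locally changed copy of an uploaded configuration."""
    return ConfigMeta(
        internal_id=str(uuid.uuid4()),      # new system-wide unique InternalId
        external_id=None,                   # ExternalId is removed
        derived_from=original.internal_id,  # record where it came from
    )
```

The original entry remains unchanged, so the two configurations cannot be confused.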

7.2.2.2 GetConfigurationById

This method is used to get the metadata for one configuration from a number of configurations.

Signature

GetConfigurationById ([in] ConfigurationIdDataType internalId, [in] Int32 timeout, [out] Handle configurationHandle, [out] ConfigurationDataType configuration, [out] Int32 error);

| Argument | Description |

| internalId | Identification of the configuration used by the vision system. This argument must not be empty. |

| timeout | With this argument the client can give a hint to the server how long it will need access to the configuration data. A value > 0 indicates an estimated maximum time for processing the data in milliseconds. A value = 0 indicates that the client will not need anything besides the data returned by the method call. A value < 0 indicates that the client cannot give an estimate. The client cannot rely on the data being available during the indicated time period. The argument is merely a hint allowing the server to optimize its resource management. |

| configurationHandle | The client can use the handle returned by the server to call the ReleaseConfigurationHandle method to indicate to the server that it has finished processing the configuration data, allowing the server to optimize its resource management. If the server does not support the ReleaseConfigurationHandle method, this value shall be 0. The client cannot rely on the data being available until ReleaseConfigurationHandle is called. |

| configuration | Requested configuration. |

| error | 0 – OK Values > 0 are reserved for errors defined by this and future standards. Values < 0 shall be used for application-specific errors. |

| Attribute | Value | ||||

| BrowseName | GetConfigurationById | ||||

| References | NodeClass | BrowseName | DataType | TypeDefinition | ModellingRule |

|---|---|---|---|---|---|

| HasProperty | Variable | InputArguments | Argument[] | PropertyType | Mandatory |

| HasProperty | Variable | OutputArguments | Argument[] | PropertyType | Mandatory |

7.2.2.3 GetConfigurationList

This method is used to get a list of all configurations. It concerns itself only with the metadata of the configurations; the actual content is transferred by a ConfigurationTransferType object.

Signature

GetConfigurationList ([in] UInt32 maxResults, [in] UInt32 startIndex, [in] Int32 timeout, [out] Boolean isComplete, [out] UInt32 resultCount, [out] Handle configurationHandle, [out] ConfigurationDataType[] configurationList, [out] Int32 error);

| Argument | Description |

| maxResults | Maximum number of configurations to return in one call; by passing 0, the client indicates that it does not put a limit on the number of configurations. |

| startIndex | Shall be 0 on the first call, multiples of maxResults on subsequent calls to retrieve portions of the entire list, if necessary. |

| timeout | With this argument the client can give a hint to the server how long it will need access to the configuration data. A value > 0 indicates an estimated maximum time for processing the data in milliseconds. A value = 0 indicates that the client will not need anything besides the data returned by the method call. A value < 0 indicates that the client cannot give an estimate. The client cannot rely on the data being available during the indicated time period. The argument is merely a hint allowing the server to optimize its resource management. |

| isComplete | Indicates whether the list has been retrieved completely: TRUE if no configurations remain beyond those retrieved according to startIndex and resultCount, FALSE if further calls are necessary. |

| resultCount | Gives the number of valid results in configurationList. |

| configurationHandle | The server shall return to each client requesting configuration data a system-wide unique handle identifying the configuration set / client combination. The handle spans continuation calls, so on every call by the same client where startIndex is not 0, the same handle shall be returned. This handle can be used by the client in a call to the ReleaseConfigurationHandle method, thereby indicating to the server that it has finished processing the configuration set, allowing the server to optimize its resource management. The client cannot rely on the data being available until the ReleaseConfigurationHandle method is called. If the server does not support ReleaseConfigurationHandle, this value shall be 0. |

| configurationList | List of configurations. |

| error | 0 – OK Values > 0 are reserved for errors defined by this and future standards. Values < 0 shall be used for application-specific errors. |

| Attribute | Value | ||||

| BrowseName | GetConfigurationList | ||||

| References | NodeClass | BrowseName | DataType | TypeDefinition | ModellingRule |

|---|---|---|---|---|---|

| HasProperty | Variable | InputArguments | Argument[] | PropertyType | Mandatory |

| HasProperty | Variable | OutputArguments | Argument[] | PropertyType | Mandatory |

The following cases must be considered with respect to the number of available configurations:

The number of configurations to be returned is less than or equal to maxResults; the first call, with startIndex=0, returns isComplete=TRUE, so the client knows that no further calls are necessary. resultCount gives the number of valid elements in the configurationList array.

The number of configurations to be returned is larger than maxResults; the first N calls (N > 0 with N ≤ (number of configurations) div maxResults, where div denotes integer division), with startIndex=(N-1)*maxResults, return isComplete=FALSE, so the client knows that further calls are necessary. The following call returns isComplete=TRUE, so the client knows that no further calls are necessary. resultCount gives the number of valid elements in the configurationList array on each call, so on the first N calls, this should be maxResults.
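Informative example: the paging behavior of GetConfigurationList can be sketched as a client-side loop. The callable passed to `fetch_all` stands in for the actual OPC UA method call, and `make_fake_server` is a hypothetical in-memory server used only to make the sketch runnable. The exact point at which the fake server reports isComplete=TRUE is an assumption. The client loop itself only relies on the isComplete flag.

```python
def fetch_all(get_configuration_list, max_results, timeout=-1):
    """Retrieve the complete configuration list page by page.

    get_configuration_list(max_results, start_index, timeout) must return
    (is_complete, result_count, handle, configuration_list, error).
    """
    results = []
    start_index = 0
    while True:
        is_complete, count, handle, page, error = get_configuration_list(
            max_results, start_index, timeout
        )
        if error != 0:
            raise RuntimeError(f"GetConfigurationList failed: error {error}")
        results.extend(page[:count])   # only resultCount entries are valid
        if is_complete:
            return results, handle     # handle can be released afterwards
        start_index += max_results     # multiples of maxResults


def make_fake_server(all_configs, handle=42):
    """Hypothetical server stand-in; maxResults == 0 means 'no limit'."""
    def call(max_results, start_index, timeout):
        limit = max_results or len(all_configs)
        page = all_configs[start_index:start_index + limit]
        is_complete = start_index + len(page) >= len(all_configs)
        return is_complete, len(page), handle, page, 0
    return call
```

With 25 configurations and maxResults=10, the loop issues three calls with startIndex 0, 10, and 20 and receives the same handle on each of them.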

7.2.2.4 ReleaseConfigurationHandle

This method is used to inform the server that the client has finished processing a given configuration set allowing the server to free resources managing this configuration set.

The server should keep the data of the configuration set available for the client until the ReleaseConfigurationHandle method is called or until a timeout given by the client has expired. However, the server is free to release the data at any time, depending on its internal resource management, so the client cannot rely on the data being available. ReleaseConfigurationHandle is merely a hint allowing the server to optimize its internal resource management. For timeout usage, see the description in Section 7.2.2.2.

Signature

ReleaseConfigurationHandle ([in] Handle configurationHandle, [out] Int32 error);

| Argument | Description |

| configurationHandle | Handle returned by GetConfigurationById or GetConfigurationList, identifying the configuration set/client combination. |

| error | 0 – OK Values > 0 are reserved for errors defined by this and future standards. Values < 0 shall be used for application-specific errors. |

| Attribute | Value | ||||

| BrowseName | ReleaseConfigurationHandle | ||||

| References | NodeClass | BrowseName | DataType | TypeDefinition | ModellingRule |

|---|---|---|---|---|---|

| HasProperty | Variable | InputArguments | Argument[] | PropertyType | Mandatory |

| HasProperty | Variable | OutputArguments | Argument[] | PropertyType | Mandatory |
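Informative example: a server may combine the timeout hint with ReleaseConfigurationHandle to decide when to free resources. The following sketch shows one possible bookkeeping scheme. The class `HandleRegistry`, the injected clock, and the error value -1 for an unknown handle are assumptions. A real server is free to release data at any time.

```python
import time


class HandleRegistry:
    """Toy bookkeeping of configuration handles and their timeout hints."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock
        self._next = 1      # 0 is reserved for "release not supported"
        self._live = {}     # handle -> expiry time, or None for no estimate

    def issue(self, timeout_ms: int) -> int:
        """Create a handle; timeout_ms follows the timeout argument rules."""
        handle = self._next
        self._next += 1
        if timeout_ms < 0:
            expiry = None   # client cannot give an estimate
        else:
            expiry = self._clock() + timeout_ms / 1000.0
        self._live[handle] = expiry
        return handle

    def release(self, handle: int) -> int:
        """ReleaseConfigurationHandle: 0 on success, -1 if unknown (assumed)."""
        if handle not in self._live:
            return -1
        del self._live[handle]
        return 0

    def purge_expired(self):
        """Free all handles whose timeout hint has elapsed."""
        now = self._clock()
        expired = [h for h, t in self._live.items() if t is not None and t <= now]
        for h in expired:
            del self._live[h]
        return expired
```

Passing a fake clock to the constructor makes the expiry behavior testable without waiting.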

7.2.2.5 RemoveConfiguration

This method is used to remove a configuration from the configuration management of the vision system.

Application Note:

A vision system may be required, e.g. in pharmaceutical or other safety-critical applications, to keep a record of the prior existence of a removed configuration. This may be important in such systems for the meta information of results that were generated while the removed configuration was active. The record makes it possible to trace which configurations were available on the vision system.

Signature

RemoveConfiguration ([in] ConfigurationIdDataType internalId, [out] Int32 error);

| Argument | Description |

| internalId | Identification of the configuration used by the vision system. This argument must not be empty. |

| error | 0 – OK Values > 0 are reserved for errors defined by this and future standards. Values < 0 shall be used for application-specific errors. |

| Attribute | Value | ||||

| BrowseName | RemoveConfiguration | ||||

| References | NodeClass | BrowseName | DataType | TypeDefinition | ModellingRule |

|---|---|---|---|---|---|

| HasProperty | Variable | InputArguments | Argument[] | PropertyType | Mandatory |

| HasProperty | Variable | OutputArguments | Argument[] | PropertyType | Mandatory |

7.2.2.6 ActivateConfiguration

This method is used to activate a configuration from the configuration management of the vision system.

Since only a single configuration can be active at any time, this method shall deactivate any other configuration which may currently be active. From that point on until the next call to this method the vision system will conduct its operation according to the settings of the activated configuration.

Note that there is no way to deactivate a configuration except by activating another one. This avoids having no active configuration on the system.

Signature

ActivateConfiguration ([in] ConfigurationIdDataType internalId, [out] Int32 error);

| Argument | Description |

| internalId | Identification of the configuration used by the vision system. This argument must not be empty. |

| error | 0 – OK Values > 0 are reserved for errors defined by this and future standards. Values < 0 shall be used for application-specific errors. |

| Attribute | Value | ||||

| BrowseName | ActivateConfiguration | ||||

| References | NodeClass | BrowseName | DataType | TypeDefinition | ModellingRule |

|---|---|---|---|---|---|

| HasProperty | Variable | InputArguments | Argument[] | PropertyType | Mandatory |

| HasProperty | Variable | OutputArguments | Argument[] | PropertyType | Mandatory |
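Informative example: the rule that exactly one configuration is active at any time can be sketched as follows. The class `ConfigurationActivator` and the error value -1 for an empty or unknown InternalId are assumptions for illustration.

```python
class ConfigurationActivator:
    """Toy model of the single active configuration of a vision system."""

    def __init__(self, known_ids, initially_active):
        self._known = set(known_ids)
        if initially_active not in self._known:
            raise ValueError("initially active configuration must be known")
        self.active = initially_active   # there is always an active one

    def activate(self, internal_id) -> int:
        """ActivateConfiguration: returns the error output argument."""
        if not internal_id or internal_id not in self._known:
            return -1                    # assumed application-specific error
        # Replacing the value implicitly deactivates the previous one;
        # there is deliberately no separate deactivate operation.
        self.active = internal_id
        return 0
```

A failed call leaves the previously active configuration in place, so the system never ends up without an active configuration.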

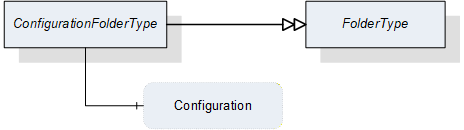

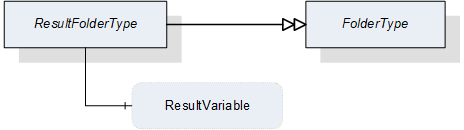

7.3 ConfigurationFolderType

This ObjectType is a subtype of the FolderType and is used to organize the configurations of a vision system. Figure 10 shows the hierarchical structure and details of the composition. It is formally defined in Table 24.

Instances of this ObjectType organize all available configurations of the vision system, which the server decides to expose in the Address Space. It may contain no configuration if no configuration is available, if the server does not expose configurations in the Address Space at all, or if no configuration matches the criteria of the server for exposure in the Address Space.

Note that the folder contains only metadata of the configurations, not the actual configuration data of the vision system.

| Attribute | Value | ||||

| BrowseName | ConfigurationFolderType | ||||

| IsAbstract | False | ||||

| References | NodeClass | BrowseName | DataType | TypeDefinition | ModellingRule |

|---|---|---|---|---|---|

| Subtype of the FolderType defined in OPC 10000-5 | |||||

| HasComponent | Variable | <Configuration> | ConfigurationDataType | BaseDataVariableType | OptionalPlaceholder |

The ConfigurationDataType used in the ConfigurationFolderType is defined in Section 12.12.

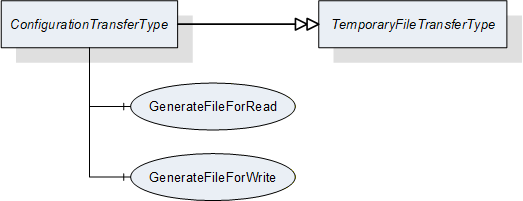

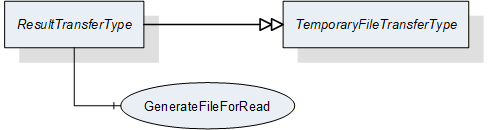

7.4 ConfigurationTransferType

7.4.1 Overview

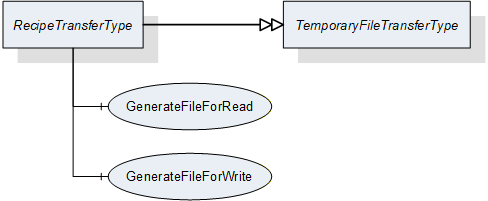

This ObjectType is a subtype of the TemporaryFileTransferType defined in OPC 10000-5 and is used to transfer configuration data as a file.

The ConfigurationTransferType overrides the Methods GenerateFileForRead and GenerateFileForWrite to specify the concrete type of the generateOptions parameter of the Methods.

Figure 11 shows the hierarchical structure and details of the composition. It is formally defined in Table 25.

| Attribute | Value | ||||

| BrowseName | ConfigurationTransferType | ||||

| IsAbstract | False | ||||

| References | NodeClass | BrowseName | DataType | TypeDefinition | ModellingRule |

|---|---|---|---|---|---|

| Subtype of the TemporaryFileTransferType defined in OPC 10000-5 | |||||

| HasComponent | Method | 0:GenerateFileForRead | -- | -- | Mandatory |

| HasComponent | Method | 0:GenerateFileForWrite | -- | -- | Mandatory |

7.4.2 ConfigurationTransferType methods

7.4.2.1 GenerateFileForRead

This method is used to start the read file transaction. A successful call of this method creates a temporary FileType Object with the file content and returns the NodeId of this Object and the file handle to access the Object.

Signature

GenerateFileForRead ([in] ConfigurationTransferOptions generateOptions, [out] NodeId fileNodeId, [out] UInt32 fileHandle, [out] NodeId completionStateMachine);

| Argument | Description |

| generateOptions | The structure used to define the generate options for the file. |

| fileNodeId | NodeId of the temporary file. |

| fileHandle | The fileHandle of the opened TransferFile. The fileHandle can be used to access the TransferFile methods Read and Close. |

| completionStateMachine | If the creation of the file is completed asynchronously, the parameter returns the NodeId of the corresponding FileTransferStateMachineType Object. If the creation of the file is already completed, the parameter is null. If a FileTransferStateMachineType object NodeId is returned, the Read Method of the file fails until the TransferState has changed to ReadTransfer. |

| Attribute | Value | ||||

| BrowseName | GenerateFileForRead | ||||

| References | NodeClass | BrowseName | DataType | TypeDefinition | ModellingRule |

|---|---|---|---|---|---|

| HasProperty | Variable | InputArguments | Argument[] | PropertyType | Mandatory |

| HasProperty | Variable | OutputArguments | Argument[] | PropertyType | Mandatory |
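Informative example: a client-side read transaction can be sketched as follows: call GenerateFileForRead, wait for the ReadTransfer state if a completion state machine is returned, then read in chunks and close the file. `FakeTransfer` is a hypothetical in-memory stand-in for a ConfigurationTransferType object. Its method names, the identifiers it returns, and the intermediate state name "ReadPrepare" are assumptions made so that the sketch is runnable.

```python
class FakeTransfer:
    """In-memory stand-in for a transfer object (illustrative only)."""

    def __init__(self, content: bytes, polls_until_ready: int = 0):
        self._content = content
        self._pending = polls_until_ready
        self._pos = 0
        self.closed = False

    def generate_file_for_read(self, options):
        state_machine = "sm-1" if self._pending else None
        return "file-1", 7, state_machine  # fileNodeId, fileHandle, state machine

    def transfer_state(self, state_machine):
        if self._pending:
            self._pending -= 1
            return "ReadPrepare"
        return "ReadTransfer"

    def read(self, handle, length):
        chunk = self._content[self._pos:self._pos + length]
        self._pos += len(chunk)
        return chunk

    def close(self, handle):
        self.closed = True


def download(transfer, options, chunk_size=4096):
    """Read the complete file content of one read transaction."""
    _file_node, handle, state_machine = transfer.generate_file_for_read(options)
    if state_machine is not None:
        # Read would fail before ReadTransfer; a real client would subscribe
        # to the state or sleep between polls instead of spinning.
        while transfer.transfer_state(state_machine) != "ReadTransfer":
            pass
    data = bytearray()
    try:
        while True:
            chunk = transfer.read(handle, chunk_size)
            if not chunk:
                break
            data.extend(chunk)
    finally:
        transfer.close(handle)  # always close the temporary file
    return bytes(data)
```

Closing the file in a `finally` clause ensures that the temporary file is released even if a read fails.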

7.4.2.2 GenerateFileForWrite

This method is used to start the write file transaction. A successful call of this method creates a temporary FileType Object with the file content and returns the NodeId of this Object and the file handle to access the Object.

Signature

GenerateFileForWrite ([in] ConfigurationTransferOptions generateOptions, [out] NodeId fileNodeId, [out] UInt32 fileHandle);

| Argument | Description |

| generateOptions | The structure used to define the generate options for the file. |

| fileNodeId | NodeId of the temporary file. |

| fileHandle | The fileHandle of the opened TransferFile. The fileHandle can be used to access the TransferFile methods Write and CloseAndCommit. |

| Attribute | Value | ||||

| BrowseName | GenerateFileForWrite | ||||

| References | NodeClass | BrowseName | DataType | TypeDefinition | ModellingRule |

|---|---|---|---|---|---|

| HasProperty | Variable | InputArguments | Argument[] | PropertyType | Mandatory |

| HasProperty | Variable | OutputArguments | Argument[] | PropertyType | Mandatory |
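Informative example: a client-side write transaction can be sketched as follows: call GenerateFileForWrite, write the content in chunks, then call CloseAndCommit. `FakeWriteTransfer` is a hypothetical in-memory stand-in. Its method names, its return values, and the chunk size are assumptions made so that the sketch is runnable.

```python
class FakeWriteTransfer:
    """In-memory stand-in for a transfer object (illustrative only)."""

    def __init__(self):
        self._buffer = bytearray()
        self.committed = None

    def generate_file_for_write(self, options):
        return "file-1", 7          # fileNodeId, fileHandle

    def write(self, handle, data):
        self._buffer.extend(data)

    def close_and_commit(self, handle):
        # The content only takes effect once it has been committed.
        self.committed = bytes(self._buffer)
        return None                 # assumed: completed synchronously


def upload(transfer, options, content: bytes, chunk_size=4096):
    """Write the complete content in one write transaction."""
    _file_node, handle = transfer.generate_file_for_write(options)
    for offset in range(0, len(content), chunk_size):
        transfer.write(handle, content[offset:offset + chunk_size])
    return transfer.close_and_commit(handle)
```

A client would typically run such an upload after AddConfiguration has returned TRUE in transferRequired.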

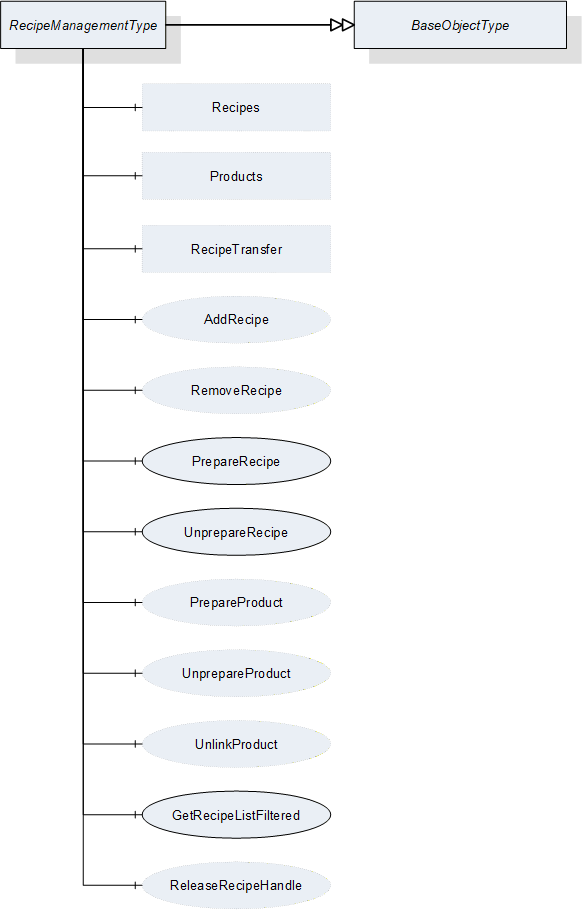

7.5 RecipeManagementType

7.5.1 Overview

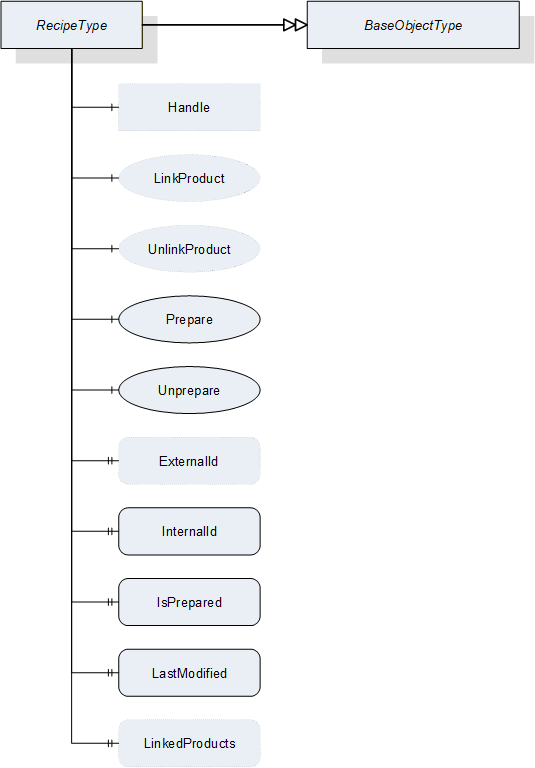

This ObjectType defines the representation of the machine vision system recipe management (for a conceptual overview of recipe management, see Section B.1; for a definition of recipes themselves, see Section B.1.2.1). Figure 12 shows the hierarchical structure and details of the composition. It is formally defined in Table 30.

For the actual data transfer, RecipeManagementType makes use of the RecipeTransferType, derived from the TemporaryFileTransferType defined in OPC 10000-5, beginning with version 1.04.

If the server chooses to expose recipe data in the Address Space (see Section B.1.3.3) using the Recipes folder of this type, the FileType object component of the RecipeType objects in this folder can also be used directly for the data transfer.

| Attribute | Value | ||||

| BrowseName | RecipeManagementType | ||||

| IsAbstract | False | ||||

| References | Node Class | BrowseName | DataType | TypeDefinition | ModellingRule |

|---|---|---|---|---|---|

| Subtype of the BaseObjectType defined in OPC 10000-5 | |||||

| HasComponent | Method | AddRecipe | -- | -- | Optional |

| HasComponent | Method | PrepareRecipe | -- | -- | Mandatory |

| HasComponent | Method | UnprepareRecipe | -- | -- | Mandatory |

| HasComponent | Method | GetRecipeListFiltered | -- | -- | Mandatory |

| HasComponent | Method | ReleaseRecipeHandle | -- | -- | Optional |

| HasComponent | Method | RemoveRecipe | -- | -- | Optional |

| HasComponent | Method | PrepareProduct | -- | -- | Optional |

| HasComponent | Method | UnprepareProduct | -- | -- | Optional |

| HasComponent | Method | UnlinkProduct | -- | -- | Optional |

| HasComponent | Object | RecipeTransfer | -- | RecipeTransferType | Optional |

| HasComponent | Object | Recipes | -- | RecipeFolderType | Optional |

| HasComponent | Object | Products | -- | ProductFolderType | Optional |

RecipeTransfer is an instance of the RecipeTransferType defined in Section 7.6 and it is used to transfer the contents of a recipe by the temporary file transfer method defined in OPC 10000-5, Annex C.4.

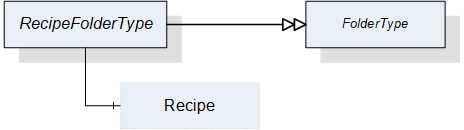

Recipes is an instance of the RecipeFolderType that organizes RecipeType objects, if the server chooses to expose recipe information in the Address Space. In this case, it may contain the set of all recipes available on the system or a filtered subset, e.g. the set of all currently prepared recipes. This is implementation-defined. If a server does not expose recipe information in the Address Space, this folder is expected to be non-existent.

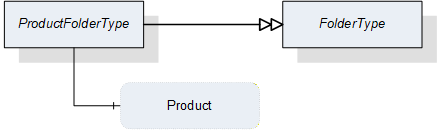

Products is an instance of the ProductFolderType that organizes ProductDataType variables, if the server chooses to expose product information in the Address Space. In this case, it may contain the set of all products available on the system or a filtered subset, e.g. the set of all products for which recipes are currently prepared. This is implementation-defined. If a server does not expose product information in the Address Space, this folder is expected to be non-existent.

7.5.2 RecipeManagementType Methods

7.5.2.1 AddRecipe

7.5.2.1.1 Overview

This method is used to add a recipe to the recipe management of the vision system. It concerns itself only with the metadata of the recipe; the actual content is transferred by a RecipeTransferType object.

The intended behavior of this method for different input arguments is described in the following subsections.

Signature

AddRecipe (
  [in] RecipeIdExternalDataType externalId
  [in] ProductIdDataType productId
  [out] RecipeIdInternalDataType internalId
  [out] NodeId recipe
  [out] NodeId product
  [out] Boolean transferRequired
  [out] Int32 error);

| Argument | Description |

| externalId | Identification of the recipe used by the environment. This argument must not be empty. |

| productId | Identification of a product the recipe is to be used for. This argument may be empty. |

| internalId | Internal identification of the recipe. This identification shall be system-wide unique and must be returned. |

| recipe | If the server chooses to represent the recipe in the Address Space, it shall return the NodeId of the newly created entry in the Recipes folder here. If the server uses only method-based recipe management, this shall be null. Note that, even if the server uses the Recipes folder to expose recipe data in the Address Space, this may be null if the newly created recipe does not fit the selection criteria of the server for the entries in the Recipes folder. |

| product | If the server chooses to represent product information in the Address Space, it shall return the NodeId of a newly created entry in the Products folder here. If the server uses only method-based recipe management, this shall be null. Note that, even if the server uses the Products folder to expose product data in the Address Space, this may be null if the newly created product does not fit the selection criteria of the server for the entries in the Products folder. |

| transferRequired | In this argument, the server returns whether the vision system assumes that a transfer of the file content of the recipe is required. Note that this is only a hint for the client. If the server returns TRUE, the client will have to assume that the vision system needs the recipe content and shall transfer it. If the server returns FALSE, the client may transfer the recipe content anyway. |

| error | 0 – OK. Values > 0 are reserved for errors defined by this and future standards. Values < 0 shall be used for application-specific errors. |

| Attribute | Value | ||||

| BrowseName | AddRecipe | ||||

| References | Node Class | BrowseName | DataType | TypeDefinition | ModellingRule |

|---|---|---|---|---|---|

| HasProperty | Variable | InputArguments | Argument[] | PropertyType | Mandatory |

| HasProperty | Variable | OutputArguments | Argument[] | PropertyType | Mandatory |
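The conventions for the error and transferRequired output arguments can be illustrated with a short, non-normative sketch. The function names and the `force` parameter are hypothetical and are not part of this specification; the sketch only restates the value ranges and the hint semantics given in the argument table above.

```python
def classify_error(error: int) -> str:
    """Map the Int32 error output argument to the three ranges in the table."""
    if error == 0:
        return "ok"
    if error > 0:
        # Reserved for errors defined by this and future standards.
        return "standard-defined"
    # Negative values are application-specific.
    return "application-specific"


def client_should_transfer(transfer_required: bool, force: bool = False) -> bool:
    """Decide on the client side whether to transfer the recipe content.

    TRUE obliges the client to transfer. FALSE is only a hint, so a client
    may still transfer (modelled here by the hypothetical 'force' flag).
    """
    return transfer_required or force
```

A client that always wants the vision system to hold its own copy of the content would call `client_should_transfer(flag, force=True)` regardless of the hint.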

7.5.2.1.2 New ExternalId

If AddRecipe is called with an ExternalId not yet existing in the recipe management of the vision system, it is expected that the vision system creates an appropriate management structure with an InternalId which is system-wide unique. The server may then return this InternalId; however, the client cannot rely on this.

If the server chooses to represent all or selected recipes in the Address Space and if the new recipe matches the current selection criteria, the server shall create a new entry in the Recipes folder in the Address Space.

The method will return TRUE in the TransferRequired argument. Since the ExternalId does not yet exist in the recipe management of the vision system, it is expected that the recipe content does not yet exist either in the local recipe storage of the vision system, and therefore needs to be transferred.

If the ProductId argument is non-empty, it is expected that the vision system creates an appropriate management structure linking the newly created recipe for use with this ProductId. If the ProductId does not yet exist on the vision system, it is expected that it is created.

If the ProductId argument is empty, no such linking takes place. Note that it will not be possible to start a job based on a ProductId not linked to a recipe.

If the server chooses to represent all or selected products in the Address Space and if the ProductId matches the selection criteria, the server shall create a new entry in the Products folder in the Address Space.

If the server chooses to represent all or selected recipes in the Address Space and if the given recipe matches the selection criteria, the ProductId shall be added to the list of products within the appropriate Recipe node.
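The bookkeeping described in this subsection can be sketched as a small in-memory model. This is a non-normative illustration: the class `RecipeStore`, its attributes and the `int-N` identifier format are hypothetical, identifiers are reduced to plain strings, and the Address Space representation is left out.

```python
from dataclasses import dataclass, field
from itertools import count


@dataclass
class RecipeStore:
    """Hypothetical stand-in for the recipe management of a vision system."""
    recipes: dict = field(default_factory=dict)  # external id -> internal id
    links: dict = field(default_factory=dict)    # product id -> set of internal ids
    _next: count = field(default_factory=lambda: count(1))

    def add_new(self, external_id: str, product_id: str = "") -> str:
        """Handle AddRecipe for an ExternalId that does not exist yet."""
        internal_id = f"int-{next(self._next)}"  # unique within this model
        self.recipes[external_id] = internal_id
        if product_id:
            # Non-empty ProductId: create the product if needed and link it.
            self.links.setdefault(product_id, set()).add(internal_id)
        # Empty ProductId: no linking takes place.
        return internal_id

    def can_start_by_product(self, product_id: str) -> bool:
        """A job can only be started by ProductId if a recipe is linked."""
        return bool(self.links.get(product_id))
```

In this model, `add_new("R1", "P1")` makes product `P1` startable, whereas `add_new("R2")` leaves the new recipe without any product link.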

7.5.2.1.3 Identically Existing ExternalId with identical recipe

If AddRecipe is called with an ExternalId already existing in the recipe management of the vision system, it is expected that the vision system checks whether an identical version of the recipe already exists, provided that the content of the ExternalId allows for such a check (most likely using the hash value).

Note that the method has no way of checking this with the actual recipe content which is not yet known to the vision system.

The method will return FALSE in the TransferRequired argument if the system comes to the conclusion that the recipe already exists with identical content on the vision system. Note that the result is not binding for the client who may decide to transfer the recipe content anyway.

If the server represents recipes in the Address Space, no new entry shall be created in the Recipes folder.

The behavior with regard to the ProductId argument is as described above for a new ExternalId. This way of calling AddRecipe can be used to link an existing recipe with another product.

7.5.2.1.4 Identically Existing ExternalId with different recipe

If AddRecipe comes to the conclusion that the content of the recipe to be transferred is different from the content already existing for this ExternalId, it shall return TRUE in the TransferRequired argument.

The behavior with respect to the management of the recipe metadata and recipe content is entirely application-defined. The vision system may decide to create a new management structure with a new InternalId and add the recipe content to the local recipe store, or it may decide to re-use the existing ExternalId and overwrite the recipe content.

If the server chooses to represent recipes in the Address Space, the behavior with respect to these recipe objects should mirror the behavior of the vision system in its internal recipe management.

The behavior with regard to the ProductId argument is as described above for a new ExternalId. If the vision system stores both recipe versions, it is implementation-defined whether both are linked to the ProductId or not.

Note that overwriting a recipe shall result in a change to the internalId of the recipe. The change may affect only the hash value; the identifier may remain the same. Historical storage is not required.
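The three cases above (new ExternalId, existing ExternalId with identical recipe, existing ExternalId with different recipe) differ mainly in the value returned in TransferRequired. The following non-normative sketch shows one possible decision function. It assumes that the ExternalId carries an identifier and an optional hash value, reduced here to a string and a bytes object; returning TRUE when no hash is available for comparison is an assumption of this sketch, not a requirement stated in this document.

```python
from typing import Dict, Optional


def transfer_required(
    known_hashes: Dict[str, Optional[bytes]],
    external_id: str,
    new_hash: Optional[bytes],
) -> bool:
    """Return the TransferRequired hint for an AddRecipe call.

    known_hashes maps the identifier part of each stored ExternalId to the
    hash of the content already on the vision system (None if unknown).
    """
    if external_id not in known_hashes:
        return True  # new ExternalId: content cannot exist locally yet
    stored = known_hashes[external_id]
    if stored is None or new_hash is None:
        return True  # assumption: without a hash, request the content
    # Identical hash: content assumed identical, no transfer needed.
    # Different hash: content differs, transfer needed.
    return stored != new_hash
```

Since the actual recipe content is not yet known to the vision system when AddRecipe is called, the comparison can only rely on the metadata, which is why the result remains a hint for the client.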

7.5.2.1.5 Local creation or editing of recipes

This is not, strictly speaking, a use-case of the method AddRecipe, but the results are comparable; therefore, the use-case is described here.

If a recipe is created locally on the vision system or is loaded onto the vision system by a different interface than the OPC Machine Vision interface, i.e. the recipe is added without using the AddRecipe method, then this recipe shall have a system-wide unique InternalId, just like a recipe added through the method.

If an existing recipe which was uploaded to the vision system through AddRecipe is locally changed, the ExternalId shall be removed from the changed version and it shall receive a new system-wide unique InternalId so that the two recipes cannot be confused. Of course the vision system may record the history from which recipe it was derived.

If the server represents recipes in the Address Space and if the locally created or edited recipes match the current filter criteria, then they shall be represented as nodes in the Recipes folder, with their system-wide unique InternalIds, but without ExternalIds.

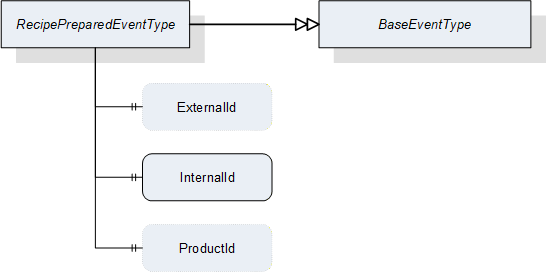

An important special case is the local editing of an already prepared recipe, described in Section B.1.2.3. Since after local editing, the already prepared recipe is different from before, effectively a new recipe has been prepared by the local editing. Therefore, a new RecipePrepared event shall be generated (see also Section 8.3.8.1).
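The rules for locally edited recipes can be sketched as follows. This is a non-normative illustration under simplifying assumptions: the `Recipe` class, its fields and the use of a random UUID as InternalId are hypothetical, and the event is represented only by its name.

```python
import uuid
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Recipe:
    internal_id: str
    external_id: Optional[str]
    content: bytes
    prepared: bool = False
    derived_from: Optional[str] = None  # optional history, not mandated


def edit_locally(original: Recipe, new_content: bytes) -> Tuple[Recipe, List[str]]:
    """Create the edited version of a recipe and list the events to fire."""
    edited = Recipe(
        internal_id=uuid.uuid4().hex,        # new system-wide unique InternalId
        external_id=None,                    # ExternalId is removed
        content=new_content,
        prepared=original.prepared,
        derived_from=original.internal_id,   # the system may record the origin
    )
    # Editing an already prepared recipe effectively prepares a new recipe.
    events = ["RecipePrepared"] if edited.prepared else []
    return edited, events
```

The original recipe object is left unchanged, so the uploaded version and the locally edited version cannot be confused.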

7.5.2.2 PrepareRecipe

This method is used to prepare a recipe so that it can be used for starting a job on the vision system.

Signature

PrepareRecipe (
  [in] RecipeIdExternalDataType externalId
  [in] RecipeIdInternalDataType internalIdIn
  [out] RecipeIdInternalDataType internalIdOut
  [out] Boolean isCompleted
  [out] Int32 error);

| Argument | Description |

| externalId | Identification of the recipe used by the environment which is to be prepared. |

| internalIdIn | Internal identification of the recipe which is to be prepared. The client can use either the externalId or the internalIdIn, leaving the other empty. If both are given, the internalIdIn takes precedence. |

| internalIdOut | Internal identification of the recipe selected based on the given externalId or internalIdIn. |

| isCompleted | Flag to indicate that the recipe has been completely prepared before the method returned. If False, the client needs either to check the properties of the recipe to determine when preparation has completed or wait for the RecipePrepared event. |

| error | 0 – OK. Values > 0 are reserved for errors defined by this and future standards. Values < 0 shall be used for application-specific errors. |

| Attribute | Value | ||||

| BrowseName | PrepareRecipe | ||||

| References | Node Class | BrowseName | DataType | TypeDefinition | ModellingRule |

|---|---|---|---|---|---|

| HasProperty | Variable | InputArguments | Argument[] | PropertyType | Mandatory |

| HasProperty | Variable | OutputArguments | Argument[] | PropertyType | Mandatory |
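The selection rule for externalId and internalIdIn can be illustrated with a non-normative sketch. Identifiers are reduced to strings, with the empty string standing for an empty argument; the function name and the use of `None` for "no recipe found" are hypothetical, and the handling of an unknown internalIdIn is an assumption of this sketch.

```python
from typing import Dict, Optional, Set


def select_recipe(
    known_internal: Set[str],
    by_external: Dict[str, str],
    external_id: str = "",
    internal_id_in: str = "",
) -> Optional[str]:
    """Return the value for internalIdOut, or None if no recipe matches."""
    if internal_id_in:
        # internalIdIn takes precedence, even if externalId is also given.
        return internal_id_in if internal_id_in in known_internal else None
    if external_id:
        return by_external.get(external_id)
    return None  # both arguments empty: nothing to prepare
```

With `by_external = {"ext-A": "int-1"}` and both arguments supplied as `external_id="ext-A"` and `internal_id_in="int-2"`, the sketch selects `int-2`, provided that this InternalId is known.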

If the vision system is in state Initialized, it is expected to change into state Ready after successful preparation of the recipe and be able to execute jobs called by a Start method with the given ExternalId.

If the vision system is already in state Ready when PrepareRecipe is called, it is expected to be in state Ready again after successful preparation of the recipe and be able to execute jobs called by a Start method with the given ExternalId. Depending on the capabilities of the vision system, it may temporarily leave state Ready for state Initialized, then return to Ready, or, if the system is capable of preparing recipes in the background, it may stay in state Ready and react instantaneously to Start jobs for other, already prepared, recipes. Also depending on the capabilities of the vision system, preparing an additional recipe may unprepare others if the number of recipes being prepared at the same time is limited.

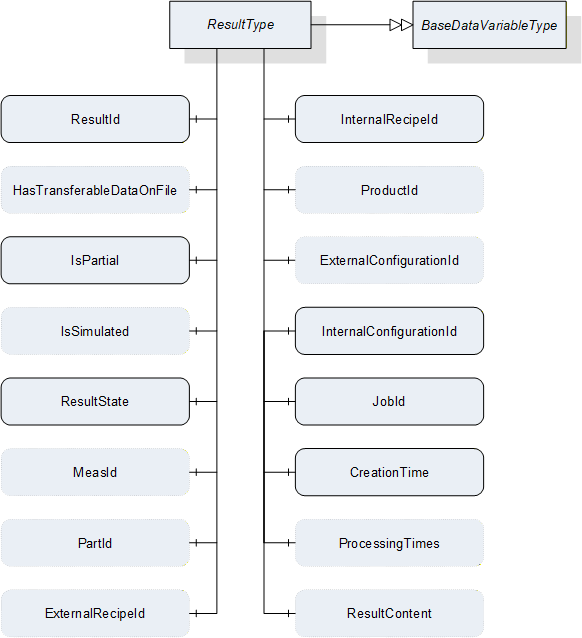

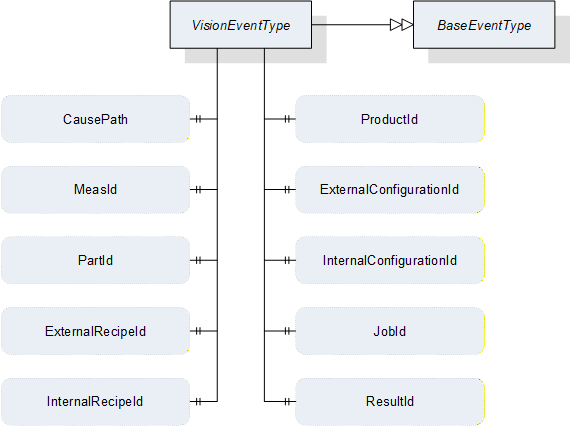

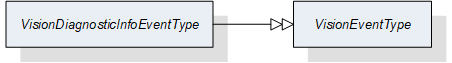

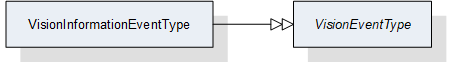

The preparation of a recipe may be a time-consuming operation. The client cannot necessarily assume that the recipe is completely prepared when the method returns. The client should therefore check the preparedness of the recipe after a reasonable amount of time or wait for a RecipePrepared event with the correct ExternalId to be fired. During the time required for preparing a recipe, the system may or may not be capable of reacting to a Start method. However, the server is free to handle PrepareRecipe as a synchronous method, returning only after the recipe is completely prepared unless an error has occurred.
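On the client side, the handling of the isCompleted output argument can be sketched as a simple polling loop. This is a non-normative illustration: `is_prepared` is a hypothetical callable standing for whatever check the client uses (for example, reading the recipe properties), and the timeout and polling interval are arbitrary example values. A client may equally wait for the RecipePrepared event instead of polling.

```python
import time
from typing import Callable


def wait_until_prepared(
    is_completed: bool,
    is_prepared: Callable[[], bool],
    timeout_s: float = 30.0,
    poll_s: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> bool:
    """Return True once the recipe is prepared, False if the timeout expires."""
    if is_completed:
        return True  # server handled PrepareRecipe synchronously
    deadline = clock() + timeout_s
    while clock() < deadline:
        if is_prepared():
            return True
        sleep(poll_s)
    return is_prepared()  # one final check after the deadline
```

The `sleep` and `clock` parameters exist only so that the loop can be exercised without real waiting; a production client would typically leave them at their defaults.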