Machine vision systems are immensely diverse. This specification is based on a conceptual model of what constitutes a machine vision system’s functionality. Making good use of the specification requires an understanding of this conceptual model. It is touched on only briefly in this section; more details can be found in Annex B.

A machine vision system is any computer system, smart camera, vision sensor or other component that has the capability to record and process digital images or video streams for the shop floor or other industrial markets, typically with the aim of extracting information from this data.

Digital images or video streams represent data in a general sense, comprising multiple spatial dimensions (e.g. 1D scanner lines, 2D camera images, 3D point clouds, image sequences, etc.) acquired by any kind of imaging technique (e.g. visible light, infrared, ultraviolet, x-ray, radar, ultrasonic, virtual imaging etc.).

With respect to a specific machine vision task, the output of a machine vision system can be raw or pre-processed images or any image-based measurements, inspection results, process control data, robot guidance data, etc.

Machine vision therefore covers a very broad range of both systems and applications.

System types range from small sensors and smart cameras to multi-computer setups with diverse sensor equipment.

Applications include identification (like DataMatrix code, bar code or character recognition), pose determination (e.g. for robot guidance), assembly checks, gauging up to very high accuracy, surface inspection, color identification, etc.

In industrial production, a machine vision system typically acts under the control and supervision of a machine control system, usually a PLC. There are many variations of this setup, depending on the type of product to be processed (e.g. individual parts or reel material), the organization of production, etc.

A common situation in the production of individual work pieces is that a PLC informs the machine vision system about the arrival of a new part by sending a start signal. The PLC then waits until the machine vision system has answered with a result of some kind, e.g. quality information (passed/failed), a measurement value (size), or position information (x- and y-coordinates, rotation, possibly z-coordinate, or full pose in the case of a 3D system), and continues processing the work piece based on the information given by the vision system. Traditionally, the interfaces used for communication between a PLC and a machine vision system are digital I/O, the various types of field buses and industrial Ethernet systems on the market, and also plain Ethernet for the transmission of bulk data.
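The start/result handshake described above can be sketched in a few lines. This is only an illustration under stated assumptions: `VisionResult`, `FakeVisionSystem`, `plc_cycle`, the nominal size and the tolerance are all hypothetical names and values chosen for the example, and a blocking function call stands in for whatever digital I/O, field bus or Ethernet exchange a real installation would use.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class VisionResult:
    """Hypothetical result record; the field names are illustrative only."""
    passed: bool                          # quality information
    size_mm: Optional[float] = None       # measurement value
    x: Optional[float] = None             # position information
    y: Optional[float] = None
    rotation_deg: Optional[float] = None


class FakeVisionSystem:
    """Stand-in for a real vision system: checks a size against a tolerance."""

    def __init__(self, nominal_mm: float = 10.0, tolerance_mm: float = 0.5) -> None:
        self.nominal_mm = nominal_mm
        self.tolerance_mm = tolerance_mm

    def start(self, measured_mm: float) -> VisionResult:
        # A real system would acquire and process an image here; the
        # measured size is passed in directly to keep the sketch runnable.
        ok = abs(measured_mm - self.nominal_mm) <= self.tolerance_mm
        return VisionResult(passed=ok, size_mm=measured_mm,
                            x=0.0, y=0.0, rotation_deg=0.0)


def plc_cycle(vision: FakeVisionSystem, measured_mm: float) -> str:
    """One PLC cycle: send start, wait for the result, act on it."""
    result = vision.start(measured_mm)    # blocks until the answer arrives
    return "continue" if result.passed else "reject"
```

In this sketch the PLC side reduces to a single call that returns only once the result is available, which mirrors the "send start, wait, continue" pattern without saying anything about the transport actually used.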

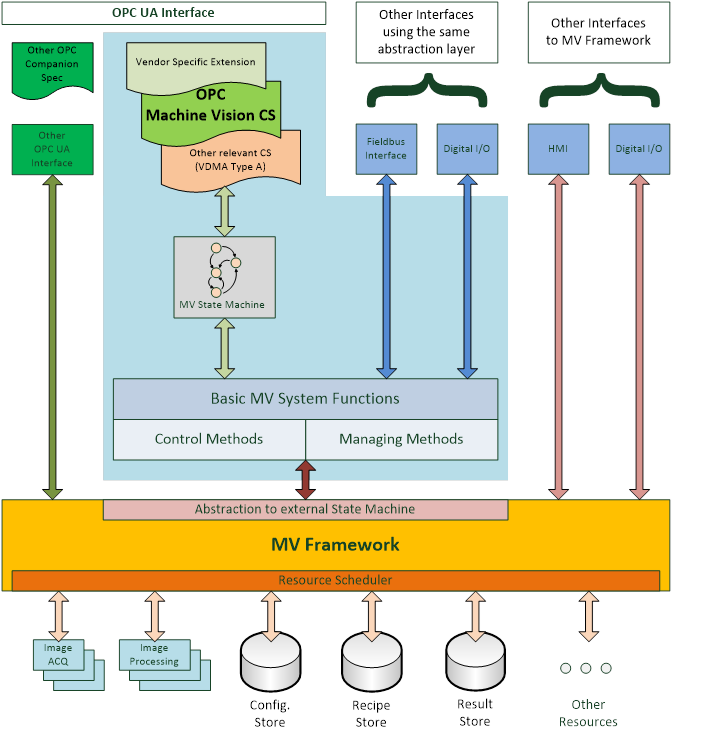

Figure 1 gives a generalized view of a machine vision system in the context of this companion specification. It assumes that there is some machine vision framework responsible for the acquisition and processing of the images. This framework is completely implementation-specific to the system and is outside the scope of this companion specification.

This underlying system is currently presented to the “outside world”, e.g. the PLC, by various interfaces like digital I/O or field bus, typically using vendor-specific protocol definitions. The interface described in this specification may co-exist with these interfaces and offer an additional view of the system, or it may be used as the only interface to the system, depending on the requirements of the particular application.

The system may also be exposed through OPC UA interfaces according to other companion specifications. For example, DataMatrix code readers are by their nature machine vision systems but can also be exposed as systems adhering to the Auto ID specification. System vendors can of course also add their own OPC UA interfaces.

This companion specification provides a particular abstraction of a system envisioned to be running in an automated production environment where “automated” is meant in a very broad sense. A test bank for analyzing individual parts can be viewed as automated in that the press of a button by the operator starts the task of the machine vision system.

This abstraction may reflect the inner workings of the machine vision framework or it may be a layer on top of the framework presenting a view of it which is only loosely related to its interior construction.

The basic assumption of the model is that a machine vision system in a production environment goes through a sequence of states which are of interest to and may be influenced by the outside world.

Therefore, a core element of this companion specification is a state machine view of the machine vision system.
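To make the idea of a state machine view concrete, the following minimal sketch models a system whose state changes only through explicitly allowed events. The state names (`IDLE`, `READY`, `EXECUTING`, `ERROR`) and event names are placeholders invented for this illustration; they are not the states or methods defined by this companion specification.

```python
from enum import Enum, auto


class State(Enum):
    # Placeholder states for illustration; not taken from the specification.
    IDLE = auto()
    READY = auto()
    EXECUTING = auto()
    ERROR = auto()


# Allowed transitions: (current state, event) -> next state.
TRANSITIONS = {
    (State.IDLE, "select_job"): State.READY,
    (State.READY, "start"): State.EXECUTING,
    (State.EXECUTING, "done"): State.READY,
    (State.EXECUTING, "fault"): State.ERROR,
    (State.ERROR, "reset"): State.IDLE,
}


class VisionStateMachine:
    """Tracks the current state and rejects events that are not allowed."""

    def __init__(self) -> None:
        self.state = State.IDLE

    def fire(self, event: str) -> State:
        key = (self.state, event)
        if key not in TRANSITIONS:
            raise ValueError(
                f"event {event!r} is not allowed in state {self.state.name}"
            )
        self.state = TRANSITIONS[key]
        return self.state
```

The outside world can both observe the system (by reading `state`) and influence it (by calling `fire`), which is the two-way relationship the model assumes.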

Also, a machine vision system may require information from the outside world, in addition to the information it gathers itself by image acquisition, e.g. information about the type of product to be processed. And it will typically pass information to the outside world, e.g. results from the processing.

Therefore, in addition to the state machine, a set of methods and data types is required to allow for this flow of information. Due to the diverse nature of machine vision systems and their applications, these data types will have to allow for vendor- and application-specific extensions.
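One way to picture a data type that allows for vendor- and application-specific extensions is a record with a fixed set of common fields plus an open container for everything else. The sketch below is an assumption made for illustration: `ResultRecord`, its fields, the `standard_view` helper and the `acme.blobCount` key are hypothetical and are not the data types defined by this specification.

```python
from dataclasses import dataclass, field
from typing import Any, Dict


@dataclass
class ResultRecord:
    """Hypothetical result type: common fields plus an extension container."""
    job_id: str                 # common field every client can rely on
    passed: bool                # common field every client can rely on
    # Vendor- or application-specific content, keyed by a namespaced name.
    vendor_data: Dict[str, Any] = field(default_factory=dict)


def standard_view(record: ResultRecord) -> Dict[str, Any]:
    """Return only the common fields, as a generic client would read them."""
    return {"job_id": record.job_id, "passed": record.passed}


# A vendor attaches extra information without changing the common fields.
record = ResultRecord("job-1", True, {"acme.blobCount": 3})
```

A generic client can work with `standard_view(record)` alone, while a client that knows the vendor's extensions can additionally read `record.vendor_data`.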

The intention of the state machine, the methods and the data types is to provide a framework allowing for standardized integration of machine vision systems into automated production systems, and guidance for filling in the application-specific areas.

Of course, vendors will always be able to extend this specification and provide additional services according to the specific capabilities of their systems and the particular applications.